Keywords: Seven-layer AI agent architecture, foundation models, agent frameworks, multi-agent systems, RAG (retrieval-augmented generation), state management, CrewAI, AutoGen, LangGraph, LlamaIndex, AutoGPT, computer-use agents, Claude, OpenAI

Chapter 1 explored the rise of AI agents—their origins, momentum, and potential to reshape industries, work, and our understanding of intelligence. But vision alone isn’t enough. Turning promise into reality requires the right tools and frameworks.

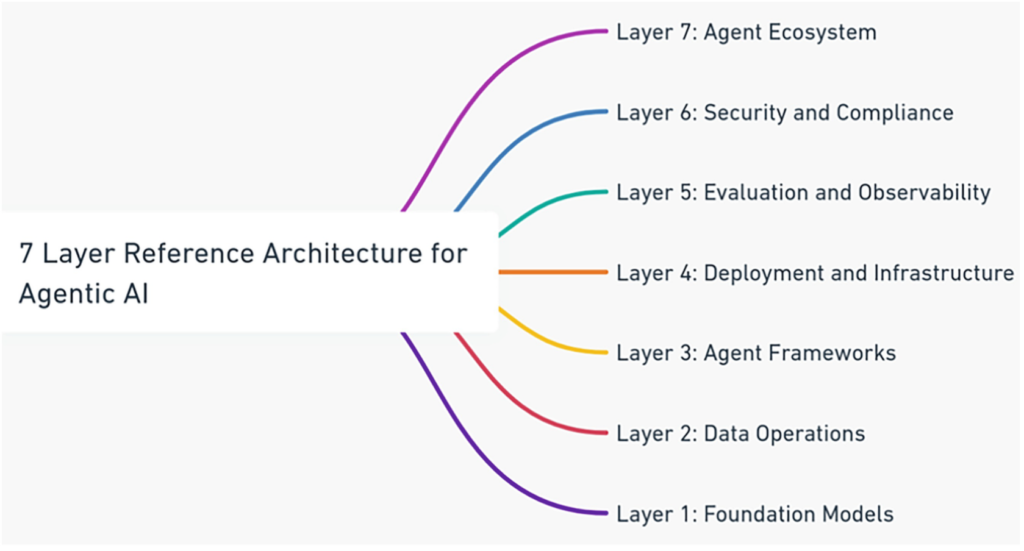

This chapter shifts from why AI agents matter to how they’re built. Using the Seven-Layer AI Agent Architecture, we break down the key components behind real-world agents—from foundational models to deployment ecosystems. We also compare leading frameworks like AutoGen, LangGraph, LlamaIndex, and AutoGPT, while addressing critical challenges such as state management, security, scalability, and data quality. Think of this chapter as a practical guide for building—and shaping—the future of AI agents.

1. The Seven-Layer AI Agent Architecture

To make sense of the rapidly evolving AI agent landscape, you can use the Seven-Layer AI Agent Architecture as a practical mental model for understanding how modern AI agents are designed, built, and deployed.

This layered approach decomposes a complex and often confusing ecosystem into distinct functional layers, each with a clear responsibility. By separating concerns and abstracting complexity, the architecture helps you reason about tools, frameworks, and infrastructure choices in a structured and scalable way.

The layers are interconnected, with each one building on the capabilities of the layer beneath it. At the base, Layer 1: Foundation Models provides the core intelligence (reasoning, language understanding, and multimodal capabilities) that powers everything above. Layer 2: Data Operations ensures that agents can ingest, retrieve, and manage data effectively, enabling techniques such as retrieval-augmented generation (RAG) and more advanced Agentic RAG workflows. Together, these two layers give agents both intelligence and context.

On top of this foundation, Layer 3: Agent Frameworks allows you to turn models and data into functioning agents. These frameworks manage state, memory, tool use, and decision-making, making it easier to build complex, multi-step workflows. Layer 4: Deployment and Infrastructure then ensures that these agents can run reliably at scale. Cloud platforms, containers, CI/CD pipelines, and emerging agent-hosting services handle availability, performance, and cost efficiency, allowing you to move from prototypes to production systems.

As agents become more autonomous, visibility and control become essential. Layer 5: Evaluation and Observability helps you measure how well agents perform, how much they cost, and whether they behave safely and reliably in real-world conditions. This includes performance benchmarks, safety evaluations, and observability tools that let you trace agent behavior, detect anomalies, and continuously improve system quality.

Layer 6: Security and Compliance acts as a cross-cutting layer that spans the entire architecture. Rather than being an afterthought, security, privacy, and regulatory compliance must be embedded into every layer—from secure model usage and data handling to infrastructure hardening and ecosystem-level access controls. Thinking of security as a vertical concern helps you design agent systems that are trustworthy, auditable, and suitable for regulated environments.

Finally, Layer 7: Agent Ecosystem is where AI agents deliver real value. This is the layer where agents integrate with business applications, workflows, and existing enterprise systems, and where marketplaces, SDKs, and developer communities emerge. At this level, the complexity of the underlying stack is hidden, allowing end users to interact with agents through intuitive, scalable applications that solve real problems.

Taken together, these seven layers give you a coherent framework for building, evaluating, and scaling AI agents responsibly. In the sections that follow, you’ll explore each layer in more detail—starting from the Agent Ecosystem at the top and working down to the foundation models that power it all.
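One way to keep the layer model at hand is to write it down as data. The sketch below is illustrative only: the `Layer` class, the `cross_cutting` flag, and the `layers_below` helper are naming assumptions made for this example, not part of any framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Layer:
    number: int
    name: str
    responsibility: str
    cross_cutting: bool = False  # True for concerns that span every layer


SEVEN_LAYERS = [
    Layer(1, "Foundation Models", "core reasoning, language, and multimodal capabilities"),
    Layer(2, "Data Operations", "ingestion, retrieval, and data management (RAG)"),
    Layer(3, "Agent Frameworks", "state, memory, tool use, and decision-making"),
    Layer(4, "Deployment and Infrastructure", "running agents reliably at scale"),
    Layer(5, "Evaluation and Observability", "measuring performance, cost, and safety"),
    Layer(6, "Security and Compliance", "security, privacy, and regulation", cross_cutting=True),
    Layer(7, "Agent Ecosystem", "integration with applications and end users"),
]


def layers_below(number):
    """Return the names of the layers that a given layer builds on."""
    return [layer.name for layer in SEVEN_LAYERS if layer.number < number]


print(layers_below(3))
```

Marking Layer 6 as cross-cutting in the data mirrors the point made above: security is a vertical concern rather than just one more step in the stack.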

2. Features and Comparison of AI Agent Frameworks

In this section, we explore the core features of several leading AI agent frameworks, compare how they differ across key dimensions, and learn how to choose the right one for your development needs. While the agent ecosystem is evolving quickly and no single framework fits every use case, understanding their design philosophies and trade-offs will help you make more informed architectural decisions.

The discussion focuses primarily on AutoGen, LangGraph, LlamaIndex, and AutoGPT, as they represent four distinct approaches to building agents: multi-agent collaboration, graph-based workflows, data-centric agents, and autonomous goal-driven systems. That said, it’s important to recognize that the ecosystem extends well beyond these tools. Frameworks such as Haystack, CrewAI, SuperAGI, AgentVerse, and Hugging Face’s Smol Agents each bring unique strengths and may be better suited for specific scenarios. When selecting a framework, you should always consider the broader landscape rather than limiting yourself to a single shortlist.

2.1 Key Features of Selected Agent Frameworks

To ground the comparison, you can start by examining the defining characteristics of each framework.

AutoGen

AutoGen, developed by Microsoft, is designed for multi-agent collaboration. It allows you to create systems where multiple agents—each with distinct roles—interact through structured conversations to solve complex problems. For example, you might combine a planner agent, a code-writing agent, and a reviewer agent to collaboratively build software.

One of AutoGen’s strengths is its flexibility in execution. You can run generated code locally, run it inside Docker containers for isolation and security, or disable code execution entirely so that agents interact purely through natural language. This makes AutoGen suitable for everything from lightweight experimentation to more robust enterprise workflows. If your use case involves interactive development, collaborative reasoning, or human-in-the-loop feedback, AutoGen offers a powerful and expressive foundation.
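To make the idea of role-based conversation concrete, here is a minimal sketch in plain Python. It does not use the AutoGen API. The `RoleAgent` class, the three stub functions, and the round-robin `run_conversation` loop are assumptions chosen for illustration, and the "agents" return canned strings where a real system would call a model.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class RoleAgent:
    name: str
    respond: Callable[[list], str]  # takes the transcript, returns a reply


def planner(transcript):
    return "PLAN: write add(a, b) and have it reviewed"


def coder(transcript):
    return "CODE: def add(a, b): return a + b"


def reviewer(transcript):
    # Approve only if some earlier message in the transcript contains code.
    submitted = [msg for _, msg in transcript if msg.startswith("CODE:")]
    return "APPROVED" if submitted else "REJECTED: no code submitted"


def run_conversation(agents, max_turns=6):
    """Round-robin turn-taking until the reviewer approves or turns run out."""
    transcript = []
    for turn in range(max_turns):
        agent = agents[turn % len(agents)]
        reply = agent.respond(transcript)
        transcript.append((agent.name, reply))
        if reply == "APPROVED":
            break
    return transcript


team = [
    RoleAgent("planner", planner),
    RoleAgent("coder", coder),
    RoleAgent("reviewer", reviewer),
]
transcript = run_conversation(team)
```

Note that the shared transcript is the only state here, which is why global reasoning in conversation-based designs tends to be implicit.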

LangGraph

LangGraph, developed by LangChain, takes a more structured and deterministic approach. Instead of conversations, you model agent behavior as a graph made up of nodes, edges, and shared state. Nodes represent agents or processing steps, edges define transitions, and the state explicitly tracks context as it flows through the system.

This graph-based design gives you fine-grained control over execution, making LangGraph particularly well suited for workflows that require branching logic, cycles, or iterative refinement. If you need to debug complex decision paths or ensure predictable behavior in production systems, LangGraph’s explicit structure can be a major advantage.
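The node, edge, and state vocabulary can be sketched without any library. The example below is not LangGraph code. It is a plain-Python approximation in which `NODES`, `EDGES`, `run_graph`, and the rule "approve from the second attempt" are all hypothetical, chosen only to show a conditional edge that forms a cycle.

```python
END = "END"


def draft(state):
    # Each node receives the shared state and returns an updated copy.
    attempts = state["attempts"] + 1
    return {**state, "attempts": attempts, "draft": f"draft v{attempts}"}


def review(state):
    # Hypothetical quality rule: accept from the second attempt onward.
    return {**state, "approved": state["attempts"] >= 2}


def route_after_review(state):
    # Conditional edge: loop back to "draft" until the review passes.
    return END if state["approved"] else "draft"


NODES = {"draft": draft, "review": review}
EDGES = {"draft": lambda state: "review", "review": route_after_review}


def run_graph(entry, state, max_steps=10):
    """Walk the graph from `entry`, recording the path of visited nodes."""
    current, path = entry, []
    while current != END and len(path) < max_steps:
        state = NODES[current](state)
        path.append(current)
        current = EDGES[current](state)
    return state, path


final_state, path = run_graph(
    "draft", {"attempts": 0, "draft": "", "approved": False}
)
```

Because every transition is an explicit function of the state, the recorded `path` doubles as a debugging trace, and `max_steps` guards against a cycle that never terminates.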

LlamaIndex

LlamaIndex is fundamentally a data-centric agent framework. Its primary focus is on connecting large language models to external knowledge sources through robust ingestion, indexing, and retrieval pipelines. Rather than emphasizing autonomy or orchestration, LlamaIndex excels at helping agents find the right information at the right time.

With its query engines and chat engines, you can build agents that handle complex, multi-turn questions grounded in large datasets. LlamaIndex also supports event-driven, multi-agent collaboration and allows agents to treat entire datasets as callable tools. If your agent’s value depends heavily on accurate, explainable data retrieval, LlamaIndex is often the best place to start.
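The idea of treating a dataset as a callable tool can be illustrated with a toy example. This sketch does not use LlamaIndex. The `DOCUMENTS` content, the keyword-overlap scoring, and the `make_query_tool` helper are assumptions for illustration, and a real pipeline would use embeddings and a proper index in place of token sets.

```python
import re

DOCUMENTS = {
    "refunds": "Refunds are issued within 14 days of a return request.",
    "shipping": "Standard shipping takes 3 to 5 business days.",
    "warranty": "Hardware is covered by a 2 year limited warranty.",
}


def tokenize(text):
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def build_index(documents):
    """Precompute a token set per document (a stand-in for a real vector index)."""
    return {doc_id: tokenize(text) for doc_id, text in documents.items()}


def make_query_tool(documents, index):
    """Wrap the indexed dataset as a single callable an agent could invoke."""
    def query(question, top_k=1):
        q = tokenize(question)
        ranked = sorted(
            index, key=lambda doc_id: len(q & index[doc_id]), reverse=True
        )
        # Return source ids along with the text so answers stay attributable.
        return [(doc_id, documents[doc_id]) for doc_id in ranked[:top_k]]
    return query


knowledge_tool = make_query_tool(DOCUMENTS, build_index(DOCUMENTS))
hits = knowledge_tool("How long does shipping take?")
```

Returning the document id with each hit is a small design choice that supports the explainability mentioned above: the agent can cite where an answer came from.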

AutoGPT

AutoGPT represents a more autonomous, goal-driven vision of AI agents. Given a high-level objective, you allow the agent to plan, execute, and iterate on tasks with minimal human intervention. AutoGPT includes long-term and short-term memory, internet access, file handling, and code execution, enabling it to operate independently over extended periods.

This autonomy makes AutoGPT compelling for exploratory tasks such as research, analysis, or repetitive workflows. However, it also introduces challenges around transparency, control, and reliability, which you’ll need to manage carefully in production settings.
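One common way to keep an autonomous loop under control is to give it an explicit step budget. The sketch below is not AutoGPT code. The `plan` and `execute` functions are hypothetical stand-ins for model and tool calls, and `max_steps` is an assumed safeguard rather than a documented AutoGPT setting.

```python
def plan(goal):
    # Hypothetical planner: a real agent would ask a language model for this list.
    return [f"search: {goal}", f"summarize: {goal}", f"write report: {goal}"]


def execute(task, memory):
    # Hypothetical executor: records a result instead of calling real tools.
    result = f"done({task})"
    memory.append(result)
    return result


def run_autonomous(goal, max_steps=5):
    """Plan once, then execute tasks until the queue is empty or the budget is spent."""
    queue = plan(goal)
    memory, log = [], []
    steps = 0
    while queue and steps < max_steps:
        task = queue.pop(0)
        result = execute(task, memory)
        log.append((steps, task, result))  # action log for later inspection
        steps += 1
    return {"completed": not queue, "steps": steps, "log": log, "memory": memory}


full_run = run_autonomous("battery recycling market")
capped_run = run_autonomous("battery recycling market", max_steps=2)
```

The capped run stops early and reports `completed` as false, which gives an operator a clear signal that the agent ran out of budget rather than finishing its goal.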

2.2 Comparative Analysis

Looking at these frameworks side by side helps clarify when each one makes sense.

State Management

State management determines how context is preserved as an agent operates. AutoGen distributes state across agents engaged in conversation, enabling diverse perspectives but making global reasoning more implicit. LangGraph centralizes state within its graph, giving you explicit control over transitions and making debugging easier. LlamaIndex embeds state primarily within its indexing and retrieval mechanisms, while AutoGPT relies on memory systems that persist context across tasks and time.

Tool Integration

All four frameworks support tool usage, but in different ways. AutoGen allows agents to call Python tools and execute code in flexible environments. LangGraph treats tools as nodes within the workflow, which makes complex tool chains easy to reason about. LlamaIndex shines by turning data sources into tools through query engines, while AutoGPT dynamically creates and executes tools via code—powerful, but potentially risky without safeguards.

Decision-Making Logic

Decision-making in AutoGen is collaborative and role-based, coordinated through group conversations. LangGraph encodes decisions directly into the graph structure using conditional edges. LlamaIndex focuses its “decisions” on selecting the most relevant information to retrieve. AutoGPT, by contrast, autonomously plans and executes actions to achieve a goal, offering flexibility at the cost of predictability.

Data Handling

If data is central to your application, LlamaIndex stands out with its connectors, indexing strategies, and retrieval performance. AutoGPT includes basic web and file handling, while AutoGen and LangGraph rely more heavily on external integrations you define yourself.

Observability and Evaluation

Observability becomes increasingly important as agents grow more autonomous. LangGraph’s explicit graph structure makes each step and transition inspectable. AutoGen logs interactions and supports human oversight. LlamaIndex offers metrics around retrieval quality. AutoGPT provides action logs and reasoning traces, but its autonomy can make real-time monitoring more difficult.
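Whichever framework you choose, the underlying mechanism is usually the same: wrap each agent step so that its duration and outcome are recorded. The following is a minimal, framework-independent sketch. The `traced` decorator, the in-memory `TRACE_LOG`, and the `lookup_order` tool are hypothetical, and a production system would export these records to a tracing backend instead of a list.

```python
import functools
import time

TRACE_LOG = []  # hypothetical in-memory sink for trace records


def traced(step_name):
    """Decorator that records duration and outcome for one agent step."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            record = {"step": step_name, "ok": True, "error": None}
            try:
                return func(*args, **kwargs)
            except Exception as exc:
                record["ok"] = False
                record["error"] = repr(exc)
                raise
            finally:
                # Runs on success and on failure, so every call is logged.
                record["seconds"] = time.perf_counter() - start
                TRACE_LOG.append(record)
        return wrapper
    return decorator


@traced("lookup_order")
def lookup_order(order_id):
    # Stand-in for a real tool call made by the agent.
    if order_id < 0:
        raise ValueError("order_id must be non-negative")
    return {"order_id": order_id, "status": "shipped"}


lookup_order(42)
try:
    lookup_order(-1)
except ValueError:
    pass

failures = [r["step"] for r in TRACE_LOG if not r["ok"]]
```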

2.3 Other Notable Frameworks

Beyond the main four, you may also encounter tools like BabyAGI for rapid prototyping, CrewAI for role-based teams of collaborating agents, MemGPT for long-term memory use cases, SuperAGI for enterprise-scale agent management, and Camel for highly customizable workflows. Each addresses a different slice of the problem space, reinforcing the idea that framework choice should be driven by use case rather than popularity.

2.4 Choosing the Right Framework

Ultimately, there is no “best” AI agent framework—only the one that best fits your requirements. You should consider factors such as the level of autonomy you need, how critical data retrieval is, whether workflows must be deterministic, and how much observability and control your application demands.

As AI agent frameworks continue to evolve, you can expect deeper support for memory, multi-agent coordination, evaluation, and enterprise integration. Keeping a flexible mental model—and revisiting your framework choices as the ecosystem matures—will be key to building resilient and future-proof agent systems.

3. Challenges You May Face When Using AI Agent Frameworks

While AI agent frameworks unlock powerful new capabilities, adopting them in real-world organizations is rarely straightforward. As you move from experimentation to production, you’re likely to encounter challenges that span technical, operational, and strategic dimensions. These issues range from tooling limitations and system integration to security, compliance, talent shortages, and cost control.

Understanding these challenges early allows you to plan mitigation strategies proactively rather than reactively. Below are eight common challenge areas you may face when implementing and scaling AI agents—and why they matter.

3.1 Framework and Tooling Limitations

AI agent frameworks evolve rapidly, and keeping up can be difficult if your organization lacks dedicated technical resources. You may find yourself working with outdated configurations, incomplete features, or unstable APIs that limit agent performance. In some cases, documentation is sparse or community support is still maturing, forcing your team to spend excessive time troubleshooting or reinventing basic functionality.

To mitigate this, you’ll need to invest in internal platform ownership or partner with vendors that offer managed support. Treating agent tooling as a first-class platform—rather than a side experiment—helps ensure upgrades, fixes, and improvements don’t disrupt critical workflows.

3.2 Integration Challenges

AI agents rarely operate in isolation. In practice, you’ll need them to integrate with CRMs, ERPs, databases, cloud services, and internal tools. This is often harder than expected. Differences in APIs, data schemas, authentication methods, and communication protocols can turn integration into a major bottleneck.

Legacy systems present an additional hurdle, as they often lack modern interfaces required for seamless connectivity. Without careful design, you may accumulate technical debt and brittle integrations. To unlock the full value of AI agents, you’ll need standardized interfaces, robust middleware, and well-defined integration patterns that allow agents to exchange data reliably across systems.
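A standardized interface often takes the form of small adapters that translate each system's records into one shared schema. The sketch below assumes two invented payload shapes for a CRM and an ERP. The field names (`Id`, `EmailAddress`, `cust_no`, `contact.mail`) and the `Customer` schema are hypothetical and would differ in any real integration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Customer:
    customer_id: str
    email: str


def from_crm(record):
    # Hypothetical CRM payload: {"Id": ..., "EmailAddress": ...}
    return Customer(
        customer_id=str(record["Id"]),
        email=record["EmailAddress"].strip().lower(),
    )


def from_erp(record):
    # Hypothetical ERP payload: {"cust_no": ..., "contact": {"mail": ...}}
    return Customer(
        customer_id=str(record["cust_no"]),
        email=record["contact"]["mail"].strip().lower(),
    )


ADAPTERS = {"crm": from_crm, "erp": from_erp}


def normalize(source, record):
    """Translate a source-specific record into the shared Customer schema."""
    try:
        adapter = ADAPTERS[source]
    except KeyError:
        raise ValueError(f"no adapter registered for source {source!r}") from None
    return adapter(record)


a = normalize("crm", {"Id": 17, "EmailAddress": " Ana@Example.com "})
b = normalize("erp", {"cust_no": "17", "contact": {"mail": "ana@example.com"}})
```

With this pattern the agent only ever sees `Customer` objects, so adding a new system means writing one adapter rather than touching agent logic.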

3.3 Scalability Challenges

As AI agents take on more responsibility, scalability becomes a critical concern. Frameworks designed for orchestration and autonomy—such as SuperAGI or multi-agent systems—can place heavy demands on compute, storage, and networking resources. Scaling these systems is technically complex and often expensive.

While cloud platforms offer elastic scaling, costs can rise quickly as usage grows. Latency can also increase as more agents and data are introduced. To scale sustainably, you’ll need thoughtful architecture choices, including load balancing, efficient task scheduling, optimized inference strategies, and clear limits on agent autonomy.
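One simple scheduling control is a cap on how many agent tasks run at the same time. The sketch below uses `asyncio.Semaphore` from the standard library. The `run_agent_task` coroutine and its 10 ms sleep are placeholders for real model or tool calls, and the limit of three is an arbitrary assumption.

```python
import asyncio


async def run_agent_task(task_id, semaphore, stats):
    async with semaphore:  # wait here if too many tasks are already running
        stats["running"] += 1
        stats["peak"] = max(stats["peak"], stats["running"])
        await asyncio.sleep(0.01)  # stand-in for a model or tool call
        stats["running"] -= 1
        return f"task-{task_id}: ok"


async def run_all(num_tasks, max_concurrent):
    semaphore = asyncio.Semaphore(max_concurrent)
    stats = {"running": 0, "peak": 0}
    results = await asyncio.gather(
        *(run_agent_task(i, semaphore, stats) for i in range(num_tasks))
    )
    return results, stats["peak"]


results, peak = asyncio.run(run_all(num_tasks=10, max_concurrent=3))
```

All ten tasks complete, but the observed peak concurrency never exceeds the configured limit, which keeps downstream APIs and budgets from being overwhelmed by a burst of agent activity.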

3.4 Security Challenges

AI agents frequently interact with sensitive data, external tools, and critical systems, which makes them attractive targets for abuse. Without strong safeguards, you risk data leakage, prompt injection, unauthorized access, or unintended actions performed by autonomous agents.

Security must be designed in from the beginning—not bolted on later. This includes access controls, sandboxed execution, audit logging, and continuous monitoring. As discussed elsewhere in this book, agent security is not just a technical issue but an organizational one.
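Access control and audit logging can be combined in a single gate that every tool call passes through. The following sketch is a simplified illustration. The role name, the two tools, and the `ALLOWED_TOOLS` policy are hypothetical, and a real deployment would also need authentication, sandboxing, and tamper-resistant log storage.

```python
from datetime import datetime, timezone

AUDIT_LOG = []


def read_ticket(ticket_id):
    return f"ticket {ticket_id}: printer offline"


def delete_database(name):
    return f"deleted {name}"


TOOLS = {"read_ticket": read_ticket, "delete_database": delete_database}

# Hypothetical policy: each agent role may call only the tools listed for it.
ALLOWED_TOOLS = {"support_agent": {"read_ticket"}}


def call_tool(role, tool_name, *args):
    """Check the allowlist, write an audit record, then run the tool if permitted."""
    allowed = tool_name in ALLOWED_TOOLS.get(role, set())
    AUDIT_LOG.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "role": role,
        "tool": tool_name,
        "allowed": allowed,
    })
    if not allowed:
        raise PermissionError(f"{role} may not call {tool_name}")
    return TOOLS[tool_name](*args)


ticket = call_tool("support_agent", "read_ticket", 101)
try:
    call_tool("support_agent", "delete_database", "prod")
    blocked = False
except PermissionError:
    blocked = True
```

The audit record is written before the permission check raises, so denied attempts are logged as well as successful calls.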

3.5 Compliance Challenges

If your agents handle personal, financial, or medical data, compliance is unavoidable. Regulations such as the EU AI Act, GDPR, HIPAA, and emerging national standards place strict requirements on data handling, transparency, and accountability.

Agents with memory capabilities can unintentionally retain sensitive information if not properly governed. To stay compliant, you’ll need clear AI governance practices, including regular audits, data anonymization, retention policies, and access controls. Lifecycle management—covering creation, operation, and retirement of agents—is essential to reduce regulatory risk and maintain trust.
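Two of these practices, anonymization and retention, can be enforced at the point where an agent writes to memory. The sketch below is deliberately narrow: the `GovernedMemory` class is hypothetical, the regular expression only catches e-mail addresses, and the 30-day window is an arbitrary assumption. Real compliance work requires broader detection of personal data and legal review of retention periods.

```python
import re
from datetime import datetime, timedelta, timezone

EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def redact(text):
    """Replace e-mail addresses before the text reaches agent memory."""
    return EMAIL_PATTERN.sub("[REDACTED_EMAIL]", text)


class GovernedMemory:
    def __init__(self, retention):
        self.retention = retention
        self.items = []  # list of (stored_at, text) pairs

    def remember(self, text, now):
        self.items.append((now, redact(text)))

    def purge_expired(self, now):
        """Drop entries older than the retention window; return how many were removed."""
        kept = [(t, s) for t, s in self.items if now - t <= self.retention]
        removed = len(self.items) - len(kept)
        self.items = kept
        return removed


memory = GovernedMemory(retention=timedelta(days=30))
start = datetime(2025, 1, 1, tzinfo=timezone.utc)
memory.remember("Customer jane.doe@example.com asked about invoices", now=start)
memory.remember("Follow-up scheduled", now=start + timedelta(days=25))
stored_text = memory.items[0][1]
removed = memory.purge_expired(now=start + timedelta(days=40))
```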

3.6 Data Quality Challenges

AI agents are only as good as the data they rely on. Poor-quality data can lead to incorrect decisions, unreliable behavior, and erosion of user trust. This is especially problematic for agents that perform reasoning, retrieval, or autonomous planning.

Common issues include data silos, inconsistent formats, outdated content, and unreliable third-party sources. To address this, you should invest in strong data foundations: standardized preprocessing pipelines, centralized data access, automated quality checks, and ongoing monitoring. High-quality data directly translates into more reliable and effective agent behavior.
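Automated quality checks do not need to be elaborate to be useful. The sketch below flags missing fields, stale content, and duplicate identifiers before records reach an agent. The record layout (`id`, `title`, `updated`) and the one-year staleness threshold are assumptions made for this example.

```python
from datetime import date


def check_record(record, today, max_age_days=365):
    """Return a list of human-readable problems found in one record."""
    problems = []
    for field in ("id", "title", "updated"):
        if not record.get(field):
            problems.append(f"missing {field}")
    updated = record.get("updated")
    if isinstance(updated, date) and (today - updated).days > max_age_days:
        problems.append("stale")
    return problems


def quality_report(records, today):
    """Map record position to its problems, including duplicate ids across records."""
    seen, report = set(), {}
    for position, record in enumerate(records):
        problems = check_record(record, today)
        if record.get("id") in seen:
            problems.append("duplicate id")
        seen.add(record.get("id"))
        if problems:
            report[position] = problems
    return report


records = [
    {"id": "a1", "title": "Returns policy", "updated": date(2025, 5, 1)},
    {"id": "a1", "title": "Returns policy (copy)", "updated": date(2025, 5, 1)},
    {"id": "b2", "title": "", "updated": date(2022, 1, 10)},
]
report = quality_report(records, today=date(2025, 6, 1))
```

Running a report like this on every ingestion batch turns data quality from an occasional cleanup task into a monitored signal.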

3.7 Skilled Workforce Constraints

Building and maintaining AI agents requires expertise across machine learning, software engineering, infrastructure, and data management. Skilled professionals in these areas are scarce, and competition for talent is intense.

Without the right skills, you risk underutilizing your agent frameworks or deploying systems that are fragile and difficult to maintain. To close this gap, you may need a combination of internal training, external partnerships, and adoption of low-code or developer-friendly frameworks that reduce dependence on highly specialized expertise.

3.8 Cost Management Challenges

AI agents introduce new cost drivers: LLM API usage, infrastructure, software licenses, integration work, and skilled labor. At scale, these costs can grow rapidly and unpredictably.

To keep AI agents financially viable, you’ll need active cost management strategies. These may include model tiering (using smaller models for routine tasks and larger ones selectively), optimizing prompts and workflows, leveraging open-source tools, and considering hybrid or on-prem deployments where appropriate. Cost awareness must be built into both technical design and operational governance.
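Model tiering can be expressed as a small routing rule plus a cost estimate. In the sketch below, the tier names, the prices, the set of routine task types, and the token threshold are all invented for illustration. Substitute your provider's actual models and pricing before drawing conclusions.

```python
# Hypothetical tiers and prices (USD per 1,000 tokens); substitute real figures.
MODEL_TIERS = {
    "small": {"price_per_1k": 0.0005},
    "large": {"price_per_1k": 0.0100},
}

ROUTINE_TASKS = {"classify", "extract", "summarize"}


def choose_tier(task_type, input_tokens, long_input_threshold=8000):
    """Send routine, short requests to the small model; everything else to the large one."""
    if task_type in ROUTINE_TASKS and input_tokens <= long_input_threshold:
        return "small"
    return "large"


def estimate_cost(requests):
    """Sum estimated spend per tier for a batch of (task_type, tokens) requests."""
    totals = {tier: 0.0 for tier in MODEL_TIERS}
    for task_type, tokens in requests:
        tier = choose_tier(task_type, tokens)
        totals[tier] += tokens / 1000 * MODEL_TIERS[tier]["price_per_1k"]
    return totals


batch = [("classify", 2000), ("summarize", 4000), ("plan", 3000)]
totals = estimate_cost(batch)

# Baseline for comparison: the same batch sent entirely to the large model.
all_large = (
    sum(tokens for _, tokens in batch) / 1000 * MODEL_TIERS["large"]["price_per_1k"]
)
```

Comparing `sum(totals.values())` with `all_large` gives a rough view of what the routing rule saves under these assumed prices, and the same structure can feed a budget alert.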

4. Summary

This chapter moves beyond the what and why of AI agents to focus on the how. It provides a practical foundation for building real-world agent systems through the Seven-Layer AI Agent Architecture, offering a structured way to understand the components, trade-offs, and dependencies involved.

Key Takeaways

- A Holistic Architectural Model

The Seven-Layer Architecture gives you a shared mental model for designing, implementing, and analyzing AI agent systems. By breaking complexity into manageable layers, it enables clearer communication, better design decisions, and more scalable implementations.

- Framework Trade-offs Matter

Comparing frameworks such as AutoGen, LangGraph, LlamaIndex, and AutoGPT highlights that no single tool fits every use case. Understanding differences in state management, tool integration, autonomy, and observability helps you choose the right framework for your specific goals.

- Emerging Agent Capabilities Are Expanding Fast

Developments like Computer Use Agents and Agentic RAG signal a shift toward more autonomous, adaptable systems that can interact directly with digital environments and complex workflows.

- Security and Compliance Are Non-Negotiable

Security and compliance must be embedded across every layer of the agent stack. A security-first mindset is essential to building trustworthy, auditable, and responsible AI agents.

- Challenges Are Opportunities for Maturity

The challenges you face (technical, organizational, and economic) are not signs of failure but signals of a maturing field. By addressing them deliberately, you can build more resilient systems and develop sustainable AI agent strategies.

Taken together, this chapter equips you with both a conceptual framework and a practical lens for navigating the rapidly evolving world of AI agents—preparing you to design systems that are not only powerful, but also scalable, secure, and responsible.