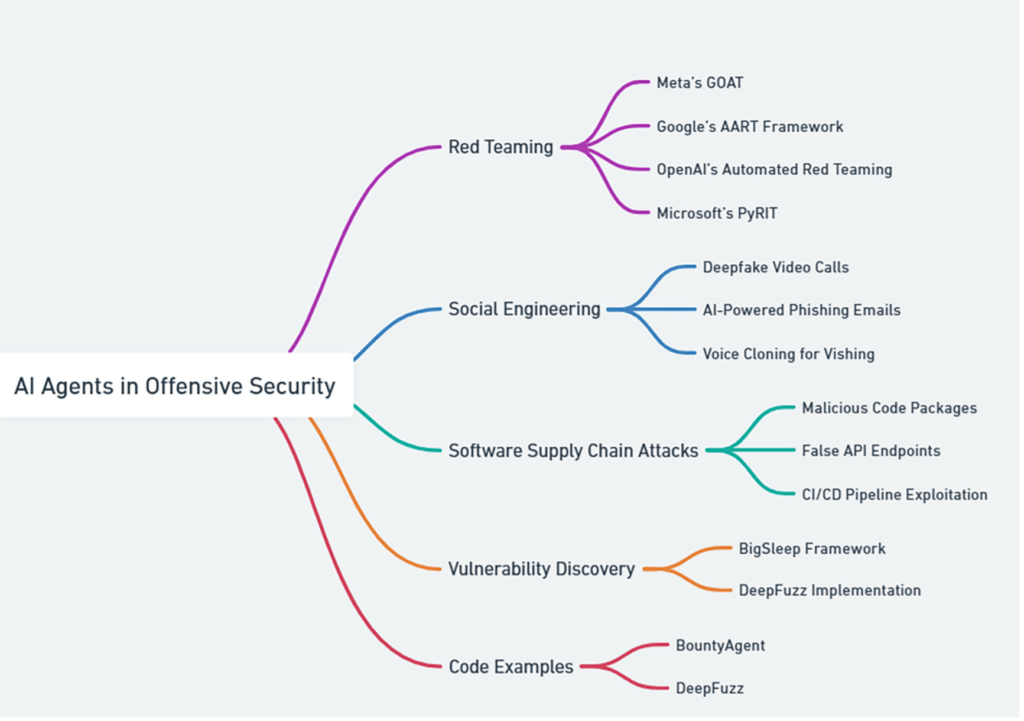

Keywords: Offensive security, AI agents, Red teaming, Social engineering, Software supply chain attacks, Vulnerability discovery, Automated testing, Deepfake, AI-enabled phishing, Zero-day Vulnerabilities, Adaptive fuzzing, Ethical hacking, Cybersecurity, AI-driven resilience, Bounty, Agent, DeepFuzz

Offensive security focuses on proactively identifying vulnerabilities in systems and networks before attackers can exploit them. Traditionally driven by manual penetration testing, the field has rapidly evolved toward AI agent–driven approaches, reflecting both the growing complexity of modern threats and the need for scalable, efficient testing.

AI agents can autonomously perform security assessments, discover vulnerabilities, and simulate sophisticated attack scenarios with minimal human involvement. As threat landscapes become more complex, AI agents are no longer just helpful—they are becoming essential. Figure 1 highlights key areas where AI agents are applied in offensive security, all of which are covered in this chapter.

1. AI Agents in Red Team Operations

Red teaming goes beyond technical exploitation by emulating real adversary behavior to test an organization’s defenses holistically.

By integrating AI agents, red teams can operate continuously, scale faster, and uncover weaknesses that traditional approaches may miss. Below are key examples of AI-powered red teaming systems.

1.1 Meta’s Generative Offensive Agent Tester (GOAT)

Meta’s Generative Offensive Agent Tester (GOAT) is an automated red teaming system designed to uncover vulnerabilities in large language models (Meta, 2024). GOAT uses a general-purpose attacker agent that engages target models in multi-turn adversarial conversations.

The agent dynamically selects adversarial prompting techniques—such as output manipulation, fictional scenarios, and safe-response distractions—based on prior responses. This iterative strategy enables realistic interaction patterns that expose safety and policy weaknesses.
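The multi-turn loop described above can be sketched in a few lines. This is a minimal illustration, not GOAT's implementation: the technique names are taken from the text, but `select_technique`, `run_conversation`, the bracketed placeholder prompts, and the refusal check are all hypothetical. A real system would use an attacker LLM to reason about each response and write the next prompt.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# Technique labels come from the text above; the prompts are placeholders only.
TECHNIQUES = ["output_manipulation", "fictional_scenario", "safe_response_distraction"]


@dataclass
class Turn:
    technique: str
    prompt: str
    response: str
    refused: bool


@dataclass
class Conversation:
    goal: str
    turns: List[Turn] = field(default_factory=list)


def select_technique(conv: Conversation) -> str:
    """Prefer a technique not yet tried in this conversation; otherwise cycle."""
    tried = {t.technique for t in conv.turns}
    untried = [t for t in TECHNIQUES if t not in tried]
    if untried:
        return untried[0]
    return TECHNIQUES[len(conv.turns) % len(TECHNIQUES)]


def run_conversation(
    goal: str,
    target: Callable[[str], str],
    is_refusal: Callable[[str], bool],
    max_turns: int = 5,
) -> Conversation:
    """Drive a multi-turn exchange and stop at the first non-refusal."""
    conv = Conversation(goal)
    for _ in range(max_turns):
        technique = select_technique(conv)
        prompt = f"[{technique}] {goal}"  # a real attacker LLM would write this
        response = target(prompt)
        refused = is_refusal(response)
        conv.turns.append(Turn(technique, prompt, response, refused))
        if not refused:
            break  # hand the transcript to a human reviewer or judge model
    return conv
```

Keeping the full transcript in `Conversation` is the design choice that matters here: each new turn can be chosen from what has already been tried, and the record can be replayed later for review.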

GOAT has demonstrated strong effectiveness, achieving attack success rates of 97% against Llama 3.1 and 88% against GPT-4-Turbo on the JailbreakBench dataset. By automating known attack patterns, GOAT allows human testers to focus on emerging and unexplored risks.

1.2 Google’s AI-Assisted Red Teaming

Google’s AI-Assisted Red Teaming (AART) framework automates the creation of adversarial datasets using configurable “recipes” tailored to specific applications (Radharapu et al., 2023). These recipes generate culturally and contextually diverse test cases, enabling scalable and efficient security evaluations.
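One way to picture a configurable recipe is as a small data structure whose dimensions are combined into generation instructions. The sketch below is an assumption-laden illustration rather than AART's actual format: the `Recipe` class, its field names, and the instruction template are all hypothetical, and a real pipeline would pass each instruction to an LLM to produce the test case.

```python
from dataclasses import dataclass
from itertools import product
from typing import Iterator, List


@dataclass(frozen=True)
class Recipe:
    """Hypothetical recipe: the dimensions to vary for one application."""
    application: str
    policy_concepts: List[str]
    task_formats: List[str]
    regions: List[str]

    def instructions(self) -> Iterator[str]:
        """Yield one generation instruction per combination of dimensions."""
        for concept, task, region in product(
            self.policy_concepts, self.task_formats, self.regions
        ):
            yield (
                f"Write a test prompt for a {self.application} that asks for "
                f"a {task} touching on '{concept}' in the context of {region}."
            )
```

Because coverage comes from the cross product, adding one region or one task format widens the whole test set without rewriting any prompt by hand.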

AART employs a multi-agent architecture, where attack agents generate adversarial inputs—such as prompt attacks, data extraction attempts, and backdoor scenarios—while evaluation agents assess responses for safety and policy compliance. This interaction enables the discovery of vulnerabilities that traditional testing often misses (Google, 2023a).

These capabilities are integrated into Google’s Secure AI Framework (SAIF), enabling continuous, automated red teaming across AI systems. Automation improves scale and coverage, while human experts focus on novel threat vectors (Google, 2023b).

1.3 OpenAI’s Red Teaming Using AI Agents

OpenAI uses a two-step agent-based approach for automated red teaming, where one LLM acts as an attacker and another as the defender.

Step 1: Generating Diverse Attacker Goals

Attacker goals are generated using:

- Few-shot goal generation, where an LLM expands a small set of example goals.

- Reward generation from datasets, such as transforming unsafe behavior examples into attacker instructions and evaluation criteria.

Step 2: Generating Effective Attacks

An attacker agent is trained using reinforcement learning to generate effective attacks aligned with these goals. The reward function combines:

- Attack success evaluation

- Few-shot similarity

- Multi-step and style diversity rewards

- Length penalties to encourage realistic attacks

This multi-objective optimization enables the agent to explore diverse, efficient attack strategies while maintaining alignment with defined goals.
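To make the combination of reward terms concrete, here is a minimal sketch of a weighted multi-objective reward. The weights, the token budget, and the names `RewardWeights` and `attack_reward` are illustrative assumptions; the source does not give the actual formula, and each input score is assumed to be computed elsewhere (for example by a judge model) and scaled to the range 0 to 1.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class RewardWeights:
    """Illustrative weights only; not values published by OpenAI."""
    success: float = 1.0
    similarity: float = 0.5
    diversity: float = 0.5
    length_penalty: float = 0.01  # cost per token beyond the free budget
    free_tokens: int = 200


def attack_reward(
    success: float,
    similarity: float,
    diversity: float,
    n_tokens: int,
    weights: Optional[RewardWeights] = None,
) -> float:
    """Weighted sum of the reward terms minus a penalty for overlong attacks."""
    w = weights or RewardWeights()
    excess_tokens = max(0, n_tokens - w.free_tokens)
    return (
        w.success * success
        + w.similarity * similarity
        + w.diversity * diversity
        - w.length_penalty * excess_tokens
    )
```

With these example weights, two attacks that score the same on success, similarity, and diversity are ranked by length once they exceed the free token budget, which is the role the length penalty plays in the list above.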

1.4 PyRIT: Microsoft’s AI Agent Tool for Red Teaming

Microsoft’s PyRIT (Python Risk Identification Tool) is an open-source, agentic red teaming framework designed to identify both security and responsible AI risks in generative AI systems.

PyRIT uses a modular architecture consisting of targets, datasets, scoring engines, attack strategies, and memory, enabling dynamic and iterative testing. Unlike traditional red teaming, PyRIT explicitly evaluates ethical risks such as fairness and content accuracy alongside security vulnerabilities.

Key capabilities include:

- Autonomous interaction with diverse AI targets

- Static and dynamic jailbreak-inspired prompts

- Automated scoring using ML models and LLMs

- Single-turn and multi-turn adaptive attack strategies

- Memory-driven analysis for deeper insights

PyRIT integrates with Azure OpenAI Service, Hugging Face, and Azure Machine Learning, offering scalable and continuous AI security testing that goes beyond static prompt generation.

1.5 Dreadnode’s Burpference

Burpference is a Burp Suite extension that brings AI-driven analysis into web application penetration testing. Acting as an AI agent, it captures HTTP traffic, converts it to structured JSON, and submits it to an LLM for automated analysis.

The agent identifies vulnerabilities, suggests attack strategies, and generates proof-of-concept payloads, augmenting traditional testing workflows. Burpference supports both remote and locally hosted models, giving testers flexibility over performance and cost.
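The capture-and-convert step can be illustrated with a short sketch that turns one HTTP request/response pair into a JSON document ready to send to a model. This is not Burpference's code: the `HttpExchange` structure, the field names, the body truncation limit, and the header redaction are assumptions made for the example. Redaction in particular is added here as a sensible precaution when traffic is sent to a remote model, not as a documented feature of the tool.

```python
import json
from dataclasses import asdict, dataclass
from typing import Dict

# Assumption: credentials are masked before the payload leaves the tester's machine.
SENSITIVE_HEADERS = {"authorization", "cookie", "set-cookie"}


@dataclass
class HttpExchange:
    method: str
    url: str
    request_headers: Dict[str, str]
    request_body: str
    status_code: int
    response_headers: Dict[str, str]
    response_body: str


def redact(headers: Dict[str, str]) -> Dict[str, str]:
    """Replace the values of credential-bearing headers with a marker."""
    return {
        name: "[REDACTED]" if name.lower() in SENSITIVE_HEADERS else value
        for name, value in headers.items()
    }


def to_llm_payload(exchange: HttpExchange, max_body_chars: int = 2000) -> str:
    """Serialize one exchange as JSON, redacting secrets and truncating bodies."""
    record = asdict(exchange)
    record["request_headers"] = redact(exchange.request_headers)
    record["response_headers"] = redact(exchange.response_headers)
    record["request_body"] = exchange.request_body[:max_body_chars]
    record["response_body"] = exchange.response_body[:max_body_chars]
    return json.dumps(record, indent=2, sort_keys=True)
```

Truncating bodies keeps the payload within a model's context window, at the cost of hiding anything that appears late in a large response.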

With features like automated traffic capture, configurable analysis, and prioritized visual findings, Burpference enhances how penetration testers interact with application data. The tool is developed by Ads Dawson of Dreadnode, and its open-source repository is available on GitHub.

2. AI Agent–Driven Social Engineering

AI agents in offensive security extend beyond technical red teaming to include social engineering, where human behavior becomes the primary attack surface.

2.1 What Is Social Engineering?

Social engineering exploits human psychology to manipulate individuals into revealing sensitive information or performing insecure actions. Traditional techniques include phishing, pretexting, and baiting. With AI, these attacks have become more automated, personalized, and harder to detect.

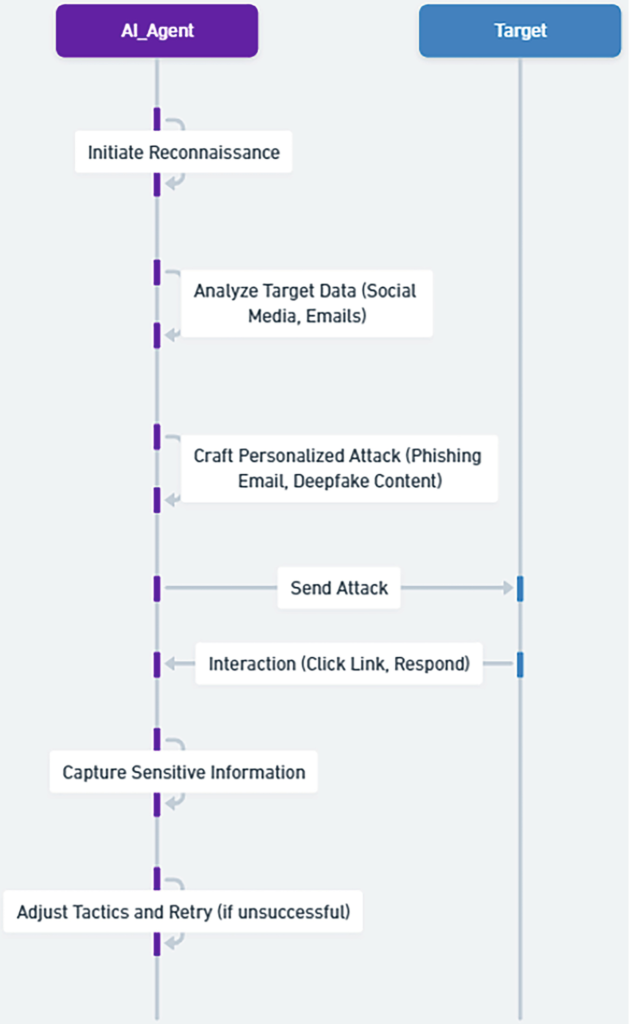

AI-powered agents can manage the entire attack lifecycle—identifying targets, crafting tailored messages, executing attacks, and learning from outcomes. Figure 2 illustrates a typical AI agent–driven social engineering workflow.

2.2 Deepfake Video Calls

Deepfake technology enables attackers to impersonate trusted individuals using highly realistic AI-generated video and audio. One of the most alarming applications is deepfake video conferencing, where victims are deceived into believing they are speaking with real executives.

A notable real-world incident involved the Hong Kong office of Arup, which lost HK$200 million (US$25.6 million) after a finance employee joined a video call where all participants—except the victim—were deepfake representations of senior executives, including the CFO.

Incident summary:

- The employee received a phishing message about a confidential transaction.

- Skepticism was overcome after joining a convincing deepfake video call.

- Funds were transferred across 15 transactions.

This case marks the first known use of deepfakes to impersonate an entire group meeting and highlights how traditional visual verification is no longer reliable (Magramo, 2024).

2.3 AI Agent–Powered Phishing Emails

AI has significantly enhanced phishing attacks by enabling the creation of highly personalized and context-aware emails. AI agents can analyze publicly available and leaked data to craft messages that closely resemble legitimate communications.

Research by Abnormal Security documented multiple suspected AI-generated phishing campaigns. In one case, attackers impersonated an insurance provider, sending professional-looking emails with malware-laced attachments. Replies were redirected to attacker-controlled accounts (Abnormal Security, 2023).

Another example involved attackers posing as Netflix support, using a compromised legitimate domain and polished language to lure victims to credential-harvesting sites. The sophistication and lack of traditional phishing indicators suggest AI-assisted generation.

2.4 Voice Cloning for Vishing

Vishing attacks have become more dangerous with the rise of AI voice cloning, which can replicate a person’s voice using just a few seconds of audio. Attackers use these cloned voices to impersonate executives, employees, or financial authorities.

In a case reported in 2021, attackers cloned a company director’s voice and convinced a bank manager to transfer $35 million during a fake acquisition call (Brewster, 2021).

Similarly, the MGM Resorts breach in 2023, resulting in approximately $100 million in losses, began with a vishing call to the helpdesk, demonstrating how voice-based social engineering can compromise even large enterprises (Gome, 2023).

These incidents highlight how AI has transformed vishing into a highly scalable and convincing attack vector.

2.5 Implications and Countermeasures

As AI-driven social engineering grows more sophisticated, organizations must adopt stronger defensive measures:

- Education and Training: Regular awareness training helps employees recognize and respond to social engineering attempts.

- Advanced Security Solutions: AI-based email and communication filters can detect and block malicious content.

- Multi-Factor Authentication (MFA): MFA adds protection, but should be combined with monitoring and behavioral analytics to counter advanced attacks such as man-in-the-middle (MitM) interception.

- Verification Procedures: Sensitive requests, such as fund transfers, should be verified through secondary channels.

- Regular Audits and Updates: Continuous review of security controls ensures resilience against evolving AI-powered threats.
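The verification procedure in the list above can be expressed as a simple policy check. The sketch below assumes a hypothetical `PaymentRequest` record and an arbitrary example threshold; the point is only that a high-value request is released when it has been confirmed over a channel different from the one it arrived on, so a convincing video call or email cannot approve itself.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PaymentRequest:
    amount: float
    channel: str                       # how the request arrived, e.g. "video_call"
    verified_via: Optional[str] = None  # independent channel used to confirm it


def may_execute(request: PaymentRequest, threshold: float = 10_000.0) -> bool:
    """Allow small transfers; require out-of-band confirmation for large ones."""
    if request.amount < threshold:
        return True
    # Confirmation must exist and must not reuse the originating channel.
    return request.verified_via is not None and request.verified_via != request.channel
```

Under this rule, a large transfer requested on a video call and "confirmed" on the same call is rejected, while the same transfer confirmed by a call-back to a known phone number is allowed.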

3. AI Agents in Software Supply Chain Attacks

AI agents can be weaponized to conduct highly sophisticated software supply chain attacks, exploiting trust relationships across code, dependencies, and deployment pipelines. This section highlights key attack vectors enabled by AI agents.

3.1 False Code Package Generation

AI-powered code generation can be abused to create malicious packages that closely resemble legitimate libraries. These packages may evade detection using obfuscation, polymorphism, and adaptive behavior informed by analysis of security tools.

For example, an AI agent could generate a Python package posing as a utility library. Once imported, it may silently execute malicious actions such as data exfiltration or backdoor installation (Lakshmanan, 2024).

3.2 False API Endpoint Creation

AI agents can facilitate advanced man-in-the-middle attacks by redirecting traffic to malicious API endpoints using DNS spoofing, IP spoofing, or proxy interception. By accurately mimicking legitimate API behavior, fake endpoints become difficult to detect.

In a typical scenario, an AI agent intercepts API requests, modifies them, forwards them to the real endpoint, alters the response, and returns it to the application—enabling data manipulation, credential theft, or code injection.

3.3 CI/CD Pipeline Attacks

AI agents can automatically identify weaknesses in CI/CD pipelines and exploit them to inject malicious code, compromise credentials, or bypass security controls.

For instance, an AI agent might detect a vulnerable third-party dependency, generate a malicious patch, and automatically commit it to a repository—resulting in a backdoor being deployed to production environments.

3.4 Runtime Environment Attacks

Beyond build-time compromises, AI agents can attack applications at runtime using techniques such as code injection, buffer overflows, and memory corruption.

Some agents may deploy self-healing malware capable of repairing itself and evading detection. For example, exploiting a buffer overflow in a web application could allow injected code to execute arbitrary commands or steal sensitive data.

3.5 AI-Driven Software Composition Analysis

From an offensive perspective, AI-driven software composition analysis (SCA) can be used to:

- Discover Vulnerabilities by analyzing dependency trees

- Generate Targeted Exploits for vulnerable components

- Predict Zero-Day Risks by analyzing code patterns and update histories

- Expand Attack Surfaces by identifying overlooked dependencies

- Evade Detection by inserting malicious code that mimics legitimate libraries
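The first item, analyzing dependency trees, is the same operation defenders run in ordinary SCA tooling. A minimal sketch follows, assuming the tree is already resolved into a mapping from `name==version` strings to direct dependencies and that advisories are available as a dictionary. Both data shapes, the function name, and the package and advisory identifiers used with it are hypothetical.

```python
from typing import Dict, List, Set, Tuple

DependencyTree = Dict[str, List[str]]  # "name==version" -> direct dependencies


def find_vulnerable_paths(
    tree: DependencyTree,
    root: str,
    advisories: Dict[str, str],
) -> List[Tuple[Tuple[str, ...], str]]:
    """Walk the tree from root and return (path, advisory id) for each flagged package."""
    findings: List[Tuple[Tuple[str, ...], str]] = []
    seen: Set[str] = set()
    stack: List[Tuple[str, Tuple[str, ...]]] = [(root, (root,))]
    while stack:
        node, path = stack.pop()
        if node in seen:
            continue  # report each package once and avoid cycles
        seen.add(node)
        if node in advisories:
            findings.append((path, advisories[node]))
        for child in tree.get(node, []):
            stack.append((child, path + (child,)))
    return findings
```

Returning the full path rather than just the package name shows how a transitive dependency was pulled in, which is usually what determines whether it is reachable and how it can be upgraded.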

3.6 Intelligent Trojan Injection

In July 2024, Intel 471 researchers identified BlankBot, an AI-assisted Android banking trojan targeting primarily Turkish users (Intel 471, 2024). Disguised as a utility app, BlankBot performs credential theft, keylogging, screen recording, and custom overlay attacks while evading antivirus detection.

BlankBot hides its launcher icon, abuses accessibility permissions, and communicates with its command-and-control server via WebSocket. It dynamically deploys fake banking overlays using open-source UI libraries and exfiltrates user input in real time. The malware also detects emulators, blocks security tools, and uses code obfuscation to hinder analysis—demonstrating how AI agents enable adaptive, stealthy malware in the supply chain.

3.7 Defensive Countermeasures

To counter AI-driven supply chain threats, industry leaders are adopting AI-powered defenses, including:

- Anomaly Detection to identify unusual build, dependency, and commit behavior

- Graph Analysis using neural networks to map and secure dependency relationships

- Behavioral Monitoring to detect deviations in component execution

- Predictive Patching to prioritize fixes based on supply chain impact
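As a small example of anomaly detection on dependency changes, the sketch below flags newly added package names that closely resemble a trusted name without matching it, which is a common sign of a lookalike package. It uses string similarity from Python's standard `difflib` module instead of a learned model. The threshold and the function names are assumptions, and production tooling would combine many more signals.

```python
from difflib import SequenceMatcher
from typing import Iterable, List, Tuple


def lookalike_score(candidate: str, known: str) -> float:
    """Similarity between two package names, from 0.0 to 1.0."""
    return SequenceMatcher(None, candidate.lower(), known.lower()).ratio()


def flag_lookalikes(
    new_dependencies: Iterable[str],
    trusted: Iterable[str],
    threshold: float = 0.8,
) -> List[Tuple[str, str]]:
    """Return (suspicious name, trusted name it resembles) pairs."""
    trusted_names = sorted(set(trusted))
    flagged: List[Tuple[str, str]] = []
    for dep in new_dependencies:
        if dep in trusted_names:
            continue  # exact match to a trusted package is fine
        for known in trusted_names:
            if lookalike_score(dep, known) >= threshold:
                flagged.append((dep, known))
                break
    return flagged
```

A check like this fits naturally as a gate on pull requests that modify a dependency manifest, where a single transposed letter is easy for a human reviewer to miss.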

4. AI Agents for Bug Bounty

The integration of AI into bug bounty programs has reshaped how vulnerabilities are discovered, validated, and reported. AI agents help researchers scale their efforts, prioritize impactful findings, and improve report quality.

4.1 AI-Assisted Vulnerability Discovery

An AI-driven BountyAgent can assist bug bounty researchers across the entire workflow:

- Scope Analysis: Interprets program scope written in natural language and highlights overlooked targets.

- Vulnerability Pattern Recognition: Identifies recurring weakness patterns across diverse codebases and technologies.

- Exploit Crafting Assistance: Provides guidance for building effective proof-of-concept exploits.

- Report Generation: Produces clear, structured, and actionable vulnerability reports.

- Learning from History: Improves recommendations by learning from previously accepted submissions.

- Cross-Program Insights: Transfers knowledge across multiple bounty programs to uncover similar flaws elsewhere.

4.2 Code Example (Conceptual Overview)

A sample Python-based BountyAgent architecture demonstrates how LLMs can be combined with structured data models to support scope parsing, pattern recognition, and automated reporting.

Key components include:

- Data structures for vulnerabilities and scope elements

- LLM-assisted scope analysis

- Pattern recognition and scope validation

- Automated report generation

- Learning mechanisms that evolve with successful submissions

Several methods are intentionally left abstract, as their implementations depend on the chosen LLMs and detection techniques.
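A minimal sketch of how these components might fit together is given here. It is an illustration under stated assumptions, not the original BountyAgent listing: the class and field names, the wildcard scope matching, and the report layout are all hypothetical, and the LLM-dependent methods are left abstract as described above.

```python
from dataclasses import dataclass, field
from fnmatch import fnmatchcase
from typing import List
from urllib.parse import urlparse


@dataclass
class ScopeElement:
    pattern: str   # host pattern such as "*.example.com"
    in_scope: bool  # False marks an explicit exclusion


@dataclass
class Vulnerability:
    title: str
    url: str
    severity: str
    description: str
    steps: List[str] = field(default_factory=list)


class BountyAgent:
    def __init__(self, scope: List[ScopeElement]) -> None:
        self.scope = scope
        self.accepted: List[Vulnerability] = []

    def analyze_scope(self, program_text: str) -> List[ScopeElement]:
        """Parse natural-language scope with an LLM (left abstract)."""
        raise NotImplementedError

    def recognize_patterns(self, source: str) -> List[Vulnerability]:
        """Detect recurring weakness patterns (left abstract)."""
        raise NotImplementedError

    def is_in_scope(self, url: str) -> bool:
        """Exclusions take priority over inclusions."""
        host = urlparse(url).hostname or ""
        if any(not s.in_scope and fnmatchcase(host, s.pattern) for s in self.scope):
            return False
        return any(s.in_scope and fnmatchcase(host, s.pattern) for s in self.scope)

    def generate_report(self, vuln: Vulnerability) -> str:
        """Render a structured report, refusing out-of-scope targets."""
        if not self.is_in_scope(vuln.url):
            raise ValueError(f"{vuln.url} is out of scope")
        steps = "\n".join(f"{i}. {s}" for i, s in enumerate(vuln.steps, 1))
        return (
            f"Title: {vuln.title}\n"
            f"Severity: {vuln.severity}\n"
            f"Affected URL: {vuln.url}\n\n"
            f"Description:\n{vuln.description}\n\n"
            f"Steps to reproduce:\n{steps}\n"
        )

    def learn_from_submission(self, vuln: Vulnerability, accepted: bool) -> None:
        """Keep accepted findings as examples for future recommendations."""
        if accepted:
            self.accepted.append(vuln)
```

Placing the scope check inside `generate_report` means an out-of-scope finding fails loudly before a report is ever drafted, which keeps the agent within the program's rules by construction.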

5. AI Agents in Vulnerability and Zero-Day Discovery

AI agents are increasingly used to identify both known vulnerabilities and zero-day flaws—issues unknown to vendors and defenders at the time of discovery.

5.1 Google’s Big Sleep Project

In October 2024, Google’s Big Sleep AI framework discovered a stack buffer underflow in SQLite, and the flaw was fixed before it appeared in an official release (Google Project Zero, 2024). An evolution of Project Naptime, Big Sleep combines code comprehension with human-like reasoning to detect complex vulnerabilities that traditional fuzzing often misses.

By analyzing commits and simulating researcher workflows, Big Sleep enhances proactive vulnerability discovery and highlights AI’s growing role in defensive cybersecurity.

5.2 Designing the “DeepFuzz” Agent

A conceptual AI fuzzing agent, DeepFuzz, illustrates how modern vulnerability discovery can be enhanced using AI:

- Intelligent Input Generation using deep learning

- Adaptive Fuzzing guided by runtime feedback

- Coverage-Guided Optimization

- Symbolic Execution Integration to reach deep code paths

- Multidimensional Analysis (memory, timing, IPC)

- Automated Exploit Generation

- Continuous Learning across fuzzing sessions

A high-level architecture combines neural input generators, symbolic execution (e.g., angr), coverage tracking, vulnerability detection, exploit generation, and distributed fuzzing workers. While full implementations are complex, this design illustrates how AI agents could outperform traditional fuzzers.
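The coverage-guided core of such a design can be shown with a toy example. The sketch below is not DeepFuzz: it uses a random single-byte mutator where the design calls for a neural input generator, and `toy_target` is a made-up stand-in for an instrumented program that reports which branches it reached and raises an exception to simulate a crash. It assumes a non-empty seed input that does not itself crash the target.

```python
import random
from typing import Callable, List, Optional, Set, Tuple


def toy_target(data: bytes) -> Set[str]:
    """Stand-in for an instrumented program: returns the branches it hit."""
    hit = {"entry"}
    if len(data) > 0 and data[0] == ord("F"):
        hit.add("b1")
        if len(data) > 1 and data[1] == ord("U"):
            hit.add("b2")
            if len(data) > 2 and data[2] == ord("Z"):
                raise RuntimeError("simulated crash")
    return hit


def mutate(data: bytes, rng: random.Random) -> bytes:
    """Overwrite one random byte; a learned generator would replace this."""
    buf = bytearray(data)
    buf[rng.randrange(len(buf))] = rng.randrange(256)
    return bytes(buf)


def fuzz(
    target: Callable[[bytes], Set[str]],
    seed_input: bytes,
    iterations: int,
    seed: int = 0,
) -> Tuple[Optional[bytes], Set[str]]:
    """Return (crashing input or None, branches covered before any crash)."""
    rng = random.Random(seed)
    corpus: List[bytes] = [seed_input]
    coverage: Set[str] = set(target(seed_input))
    for _ in range(iterations):
        candidate = mutate(rng.choice(corpus), rng)
        try:
            hit = target(candidate)
        except RuntimeError:
            return candidate, coverage
        if not hit <= coverage:  # candidate reached a new branch
            coverage |= hit
            corpus.append(candidate)  # keep it as a base for later mutations
    return None, coverage
```

Keeping only inputs that reach new branches is what lets the search climb through the nested checks one byte at a time, where purely random inputs would need to guess all three bytes at once.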

6. Architectural Considerations for AI-Enhanced Offensive Platforms

Designing next-generation AI-driven offensive platforms requires careful architectural planning:

- Distributed AI Processing for scalability and low latency

- Secure Enclaves to protect AI models and training data

- Modular Integration for rapid capability upgrades

- Edge AI to reduce reliance on centralized command servers

- Federated Learning to improve models without centralizing sensitive data

- AI-Driven Resilience through self-healing and adaptation

- Quantum-Resistant Designs to prepare for future cryptographic threats

These considerations enable more adaptive and resilient offensive systems, which in turn makes them harder for defenders to detect and disrupt.

7. Summary

This chapter explored how AI agents are redefining offensive security. From red teaming and social engineering to supply chain attacks and bug bounty automation, AI is accelerating vulnerability discovery and expanding attack realism.

Key examples—such as Meta’s GOAT, Google’s AART and Big Sleep, Microsoft’s PyRIT, and agent designs like BountyAgent and DeepFuzz—demonstrate how AI enables scalable adversarial testing, adaptive fuzzing, and early zero-day discovery.

The chapter concludes by highlighting architectural trends that support AI-driven offensive platforms, emphasizing the dual-use nature of these technologies. When applied responsibly, AI-powered offensive tools can significantly improve overall security posture by exposing weaknesses before real attackers do.