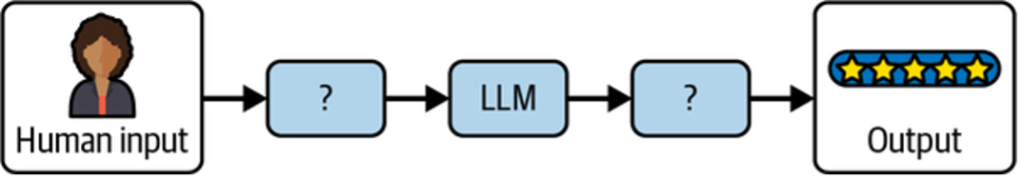

The Preface introduced the power of LLM prompting and showed how different techniques—especially when combined—can dramatically change model outputs. The core challenge in building effective LLM applications lies in crafting the right prompt and reliably processing the model’s response to produce accurate results (see Figure 1).

Solving this problem puts you on a strong path toward building both simple and complex LLM-powered systems. In this chapter, we explore how LangChain’s building blocks map to core LLM concepts and how combining them enables robust applications. First, we briefly explain why LangChain is useful.

Why LangChain?

LLM applications can be built directly using a provider’s SDK (such as OpenAI’s). However, learning LangChain pays off both short- and long-term for two main reasons:

Prebuilt common patterns

LangChain includes reference implementations for common LLM patterns such as chain-of-thought and tool calling. These provide a fast starting point and are often sufficient out of the box. When they aren’t, LangChain also offers flexible lower-level components.

Interchangeable building blocks

LangChain components—LLMs, chat models, output parsers, embeddings, and vector stores—follow shared interfaces, making them easy to swap. This future-proofs applications as providers and requirements evolve.

Throughout this tutorial, examples use:

- LLM / Chat model: OpenAI

- Embeddings: OpenAI

- Vector store: PGVector

Each can be replaced with alternatives:

- Chat models: Anthropic (commercial) or Ollama (open source)

- Embeddings: Cohere (commercial) or Ollama (open source)

- Vector stores: Weaviate or OpenSearch

LangChain’s abstraction goes beyond shared method names. For example, while OpenAI and Anthropic both support chat-based APIs, their message formats differ subtly. Mixing them directly causes friction. LangChain smooths over these differences, enabling provider-agnostic applications—such as chatbots that use multiple models in the same conversation.
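To make the message-format difference concrete, here is a minimal plain-Python sketch. It does not use LangChain or either provider's SDK. The `Message` class and both helper functions are hypothetical, and the payload shapes are simplified: OpenAI-style chat requests carry the system prompt as one more entry in the message list, whereas Anthropic-style requests pass it as a separate top-level `system` field.

```python
from dataclasses import dataclass


@dataclass
class Message:
    """Provider-neutral message (hypothetical, for illustration only)."""
    role: str      # "system", "user", or "assistant"
    content: str


def to_openai_style(messages):
    """OpenAI-style payload: the system prompt is just another message."""
    return {"messages": [{"role": m.role, "content": m.content} for m in messages]}


def to_anthropic_style(messages):
    """Anthropic-style payload: system text is a top-level field, not a message."""
    system_text = "\n".join(m.content for m in messages if m.role == "system")
    chat = [
        {"role": m.role, "content": m.content}
        for m in messages
        if m.role != "system"
    ]
    payload = {"messages": chat}
    if system_text:
        payload["system"] = system_text
    return payload


conversation = [
    Message("system", "You are a helpful assistant."),
    Message("user", "What is the capital of France?"),
]

openai_payload = to_openai_style(conversation)
anthropic_payload = to_anthropic_style(conversation)
```

Keeping one neutral representation and converting at the edge is the general idea behind a provider-agnostic layer. LangChain's own message classes and converters are more involved than this sketch.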

Finally, LangChain provides orchestration features that become essential as applications grow:

- Callback-based observability

- Consistent interfaces across components

- Support for interrupting, resuming, and retrying long-running workflows

These capabilities make LangChain a practical foundation for production-grade LLM applications.

Getting Set Up with LangChain

To follow along with this chapter and the ones ahead, first set up LangChain locally. If you haven’t already, create an OpenAI account as described in the Preface. Then generate an API key from OpenAI’s API Keys page and save it.

Python Setup

- Ensure Python is installed (see OS-specific instructions).

- (Optional) Install Jupyter to run examples in notebooks:

pip install notebook

- Install LangChain and required packages:

pip install langchain langchain-openai langchain-community

pip install langchain-text-splitters langchain-postgres

- Set your OpenAI API key in the environment:

export OPENAI_API_KEY=your-key

- Launch Jupyter:

jupyter notebook

You’re now ready to run the Python examples.

Using LLMs in LangChain

To recap, LLMs are the core engine behind most generative AI applications. LangChain provides two simple interfaces for interacting with LLM providers:

- LLMs

- Chat models

LLM Interface

The LLM interface takes a single string prompt, sends it to the provider, and returns the model’s response.

from langchain_openai.llms import OpenAI

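# The completion-style LLM interface needs a completion model;

# gpt-3.5-turbo itself is a chat model.

model = OpenAI(model="gpt-3.5-turbo-instruct")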

model.invoke("The sky is")

Output

Blue!

The model parameter selects the underlying LLM. Most providers offer multiple models with trade-offs in capability, cost, and latency.

Common configuration options include:

- temperature: Controls randomness. Lower values (e.g., 0.1) produce predictable output; higher values (e.g., 0.9) increase creativity.

- max_tokens: Limits output length and cost. Setting this too low may truncate responses.

Additional parameters vary by provider, so consult the relevant documentation.
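The effect of temperature can be illustrated with the textbook formulation, in which the model's raw token scores are divided by the temperature before being turned into probabilities. The sketch below is plain Python, the scores are made up, and providers may implement sampling differently.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities, sharpened or flattened by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [score / temperature for score in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(score - peak) for score in scaled]
    total = sum(exps)
    return [value / total for value in exps]


# Hypothetical scores for three candidate next tokens after "The sky is"
logits = [2.0, 1.0, 0.5]

focused = softmax_with_temperature(logits, 0.1)  # low temperature
diverse = softmax_with_temperature(logits, 0.9)  # high temperature
```

At 0.1 almost all of the probability mass sits on the top-scoring token, so output is nearly deterministic. At 0.9 the alternatives keep a meaningful share, which is where the extra variety comes from.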

Chat Model Interface

Chat models support multi-turn conversations using structured message roles:

- System: Instructions for how the model should behave

- User: User input

- Assistant: Model-generated responses

This interface is especially useful for chatbot-style applications.

from langchain_openai.chat_models import ChatOpenAI

from langchain_core.messages import HumanMessage

model = ChatOpenAI()

prompt = [HumanMessage("What is the capital of France?")]

model.invoke(prompt)

Output

AIMessage(content='The capital of France is Paris.')

Chat models use different message types:

- HumanMessage (user role)

- AIMessage (assistant role)

- SystemMessage (system role)

- ChatMessage (custom role)

Using System Instructions

System messages allow you to control model behavior independently of the user’s question.

from langchain_openai.chat_models import ChatOpenAI

from langchain_core.messages import HumanMessage, SystemMessage

model = ChatOpenAI()

system_msg = SystemMessage(

"You are a helpful assistant that responds to questions with three exclamation marks."

)

human_msg = HumanMessage("What is the capital of France?")

model.invoke([system_msg, human_msg])

Output

AIMessage(content='Paris!!!')

This shows how system instructions can enforce consistent, predictable behavior across your AI application—even when users don’t explicitly request it.

Making LLM Prompts Reusable

Prompt instructions strongly influence model output. A good prompt provides context and guides the model toward relevant answers. While prompts often start as static strings, real applications require dynamic inputs such as user questions or retrieved context.

From Static to Dynamic Prompts

A detailed prompt may look like this:

Answer the question based on the context below. If the question cannot be answered using the information provided, answer with "I don’t know".

Context: …

Question: …

Answer:

Hardcoding values limits reuse. LangChain solves this with prompt templates, which define a reusable structure and inject values at runtime.

PromptTemplate

from langchain_core.prompts import PromptTemplate

template = PromptTemplate.from_template(

"""Answer the question based on the context below. If the question cannot be

answered using the information provided, answer with "I don't know".

Context: {context}

Question: {question}

Answer:"""

)

template.invoke({

"context": """The most recent advancements in NLP are being driven by Large

Language Models (LLMs). These models outperform their smaller counterparts

and have become invaluable for developers.""",

"question": "Which model providers offer LLMs?"

})

The template defines placeholders ({context}, {question}) that are filled in at runtime. The curly-brace syntax is the same one Python uses for f-strings and str.format.
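The mechanics of a template (find the placeholders, check that every value is supplied, then substitute) can be sketched with the standard library alone. This is an illustration of the idea, not LangChain's implementation. The helper names `input_variables` and `render` are hypothetical.

```python
from string import Formatter

TEMPLATE = (
    "Answer the question based on the context below.\n"
    "Context: {context}\n"
    "Question: {question}\n"
    "Answer:"
)


def input_variables(template):
    """Return placeholder names in order of first appearance."""
    names = []
    for _, field_name, _, _ in Formatter().parse(template):
        if field_name and field_name not in names:
            names.append(field_name)
    return names


def render(template, values):
    """Fill the template, failing loudly if a required value is missing."""
    missing = [name for name in input_variables(template) if name not in values]
    if missing:
        raise KeyError(f"missing template variables: {missing}")
    return template.format(**values)


variables = input_variables(TEMPLATE)
prompt_text = render(
    TEMPLATE,
    {"context": "LLMs drive NLP.", "question": "What drives NLP?"},
)
```

Validating the inputs before formatting is the main advantage over a bare string: a forgotten variable surfaces as a clear error instead of a malformed prompt.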

Using a PromptTemplate with an LLM

Once defined, both the prompt template and model can be reused multiple times.

from langchain_openai.llms import OpenAI

from langchain_core.prompts import PromptTemplate

template = PromptTemplate.from_template(

"""Answer the question based on the context below. If the question cannot be

answered using the information provided, answer with "I don't know".

Context: {context}

Question: {question}

Answer:"""

)

model = OpenAI()

prompt = template.invoke({

"context": """The most recent advancements in NLP are being driven by Large

Language Models (LLMs). Developers can access them through Hugging Face,

OpenAI, and Cohere.""",

"question": "Which model providers offer LLMs?"

})

model.invoke(prompt)

Output

Hugging Face's `transformers` library, OpenAI, and Cohere offer LLMs.

ChatPromptTemplate for Conversational Apps

For chat-based applications, LangChain provides ChatPromptTemplate, which supports message roles such as system and human.

from langchain_core.prompts import ChatPromptTemplate

template = ChatPromptTemplate.from_messages([

("system", "Answer the question based on the context below. If the question cannot be answered using the information provided, answer with \"I don't know\"."),

("human", "Context: {context}"),

("human", "Question: {question}"),

])

template.invoke({

"context": """The most recent advancements in NLP are being driven by Large

Language Models (LLMs). Developers can access them through Hugging Face,

OpenAI, and Cohere.""",

"question": "Which model providers offer LLMs?"

})

This produces a structured prompt composed of a SystemMessage and multiple HumanMessage objects, while still allowing dynamic inputs.
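Conceptually, a chat template is a list of (role, template) pairs that are each filled independently. The plain-Python sketch below mimics that behavior with dictionaries instead of LangChain message objects. `format_chat` is a hypothetical helper.

```python
def format_chat(template_messages, values):
    """Fill each (role, template) pair and return a list of role/content dicts."""
    return [
        {"role": role, "content": text.format(**values)}
        for role, text in template_messages
    ]


chat_template = [
    ("system", "Answer using only the context provided."),
    ("human", "Context: {context}"),
    ("human", "Question: {question}"),
]

messages = format_chat(
    chat_template,
    {
        "context": "Developers can access LLMs through Hugging Face, OpenAI, and Cohere.",
        "question": "Which model providers offer LLMs?",
    },
)
```

The roles stay fixed while only the content varies, which keeps the instructions in the system message separate from user-supplied text.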

Using ChatPromptTemplate with a Chat Model

from langchain_openai.chat_models import ChatOpenAI

from langchain_core.prompts import ChatPromptTemplate

template = ChatPromptTemplate.from_messages([

("system", "Answer the question based on the context below. If the question cannot be answered using the information provided, answer with \"I don't know\"."),

("human", "Context: {context}"),

("human", "Question: {question}"),

])

model = ChatOpenAI()

prompt = template.invoke({

"context": """The most recent advancements in NLP are being driven by Large

Language Models (LLMs). Developers can access them through Hugging Face,

OpenAI, and Cohere.""",

"question": "Which model providers offer LLMs?"

})

model.invoke(prompt)

Output

AIMessage(content="Hugging Face, OpenAI, and Cohere offer LLMs.")

By separating prompt structure from runtime values, LangChain templates make prompts reusable, composable, and easier to maintain—an essential pattern for production LLM applications.

Getting Specific Formats out of LLMs

While plain text output is often sufficient, many applications require structured, machine-readable output—such as JSON—to pass LLM results directly into other parts of a system (APIs, databases, or downstream code).

JSON Output

JSON is the most common structured format used with LLMs. To generate reliable JSON, you first define a schema describing the expected output, then instruct the model to follow it.

In LangChain, this is done by pairing a schema with the model using with_structured_output.

from langchain_openai import ChatOpenAI

# Recent LangChain releases use Pydantic directly;

# the langchain_core.pydantic_v1 shim is deprecated.

from pydantic import BaseModel

class AnswerWithJustification(BaseModel):

    """An answer to the user's question along with justification for the answer."""

    answer: str

    justification: str

llm = ChatOpenAI(model="gpt-3.5-turbo", temperature=0)

structured_llm = llm.with_structured_output(AnswerWithJustification)

structured_llm.invoke(

"What weighs more, a pound of bricks or a pound of feathers?"

)

Output

AnswerWithJustification(

    answer='They weigh the same',

    justification='Both a pound of bricks and a pound of feathers weigh one pound.'

)

How Structured Output Works

The schema serves two purposes:

- Guidance: LangChain converts the schema into a JSON Schema and sends it to the LLM (using function calling or prompting, depending on the model).

- Validation: The model’s response is validated against the schema before being returned, ensuring the output matches the expected structure exactly.

By enforcing schemas, LangChain makes LLM outputs predictable, safe to parse, and easy to integrate into larger applications—an essential step for production-ready systems.
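The validation half of this process can be sketched without any libraries. The example below assumes a deliberately simple schema format (a dictionary mapping field names to Python types) rather than JSON Schema or Pydantic. `parse_structured` and `ANSWER_SCHEMA` are hypothetical names.

```python
import json

# Hypothetical schema: field name -> required Python type
ANSWER_SCHEMA = {"answer": str, "justification": str}


def parse_structured(raw_text, schema):
    """Parse JSON text and check it against the expected fields and types."""
    data = json.loads(raw_text)  # malformed JSON raises a ValueError subclass
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = [key for key in schema if key not in data]
    if missing:
        raise ValueError(f"missing fields: {missing}")
    for key, expected_type in schema.items():
        if not isinstance(data[key], expected_type):
            raise ValueError(f"field {key!r} should be {expected_type.__name__}")
    # Keep only the fields the schema declares
    return {key: data[key] for key in schema}


raw = '{"answer": "They weigh the same", "justification": "Both weigh one pound."}'
parsed = parse_structured(raw, ANSWER_SCHEMA)
```

Rejecting a malformed response at this boundary means downstream code never has to handle a half-valid result.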

Other Machine-Readable Formats with Output Parsers

Beyond JSON, LLMs can generate other structured formats such as CSV, XML, or lists. In LangChain, this is handled using output parsers—components that help turn raw model text into structured data.

Output parsers serve two main purposes:

Providing Format Instructions

Parsers can supply formatting guidance that is injected into the prompt, helping the LLM produce output in a form the parser understands.

Validating and Parsing Output

Parsers transform the model’s text output into a structured representation (for example, a list or table). This may include cleaning extra text, handling partial output, and validating values.

Example: Comma-Separated List

from langchain_core.output_parsers import CommaSeparatedListOutputParser

parser = CommaSeparatedListOutputParser()

items = parser.invoke("apple, banana, cherry")

Output

['apple', 'banana', 'cherry']

LangChain includes many built-in output parsers for common formats, including CSV, XML, and others. In the next section, these parsers are combined with prompts and models to produce fully structured, end-to-end LLM workflows.
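The two responsibilities of an output parser described above, supplying format instructions and parsing the reply, fit in a few lines of plain Python. The class below is a toy stand-in written for illustration, not LangChain's CommaSeparatedListOutputParser, and the instruction wording is an assumption.

```python
class CommaListParser:
    """Toy parser: a plain-Python stand-in for illustration only."""

    def get_format_instructions(self):
        # Text meant to be appended to the prompt sent to the model
        return "Respond with a list of comma-separated values, e.g. `foo, bar, baz`."

    def parse(self, text):
        # Split on commas, trim whitespace, and drop empty entries
        return [item.strip() for item in text.split(",") if item.strip()]


parser = CommaListParser()

# 1. Guidance: inject the instructions into the prompt
prompt = f"List three fruits.\n{parser.get_format_instructions()}"

# 2. Parsing: clean up a slightly messy model reply
items = parser.parse(" apple, banana ,cherry,\n")
```

Pairing the instructions with the parsing logic in one object keeps the prompt and the parser from drifting apart.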

Assembling the Many Pieces of an LLM Application

So far, you’ve seen the core building blocks of LangChain. The next question is how to combine them effectively into a full LLM application.

The Runnable Interface

All LangChain components—models, prompt templates, and output parsers—share a common Runnable interface. This is why you’ve seen the same invoke() pattern throughout the examples.

Every runnable component supports:

- invoke: transform a single input into an output

- batch: efficiently process multiple inputs

- stream: stream output incrementally as it’s generated

Additional features include built-in retries, fallbacks, schema support, runtime configuration, and asyncio equivalents in Python.

Because the interface is consistent, once you learn it for one component, it applies to all others.

from langchain_openai.chat_models import ChatOpenAI

model = ChatOpenAI()

# Single input → single output

completion = model.invoke("Hi there!")

# AIMessage(content="Hi!")

# Multiple inputs → multiple outputs

completions = model.batch(["Hi there!", "Bye!"])

# [AIMessage(content="Hi!"), AIMessage(content="See you!")]

# Streamed output, one chunk at a time

for token in model.stream("Bye!"):

    print(token.content)

# Good

# bye

# !

In practice:

- invoke() returns one complete result

- batch() returns a list of results

- stream() yields partial outputs as they become available (or a single chunk if streaming isn’t supported)
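To see why one interface can serve every component, consider this minimal plain-Python sketch of the three methods. `SimpleRunnable` is a hypothetical class and not LangChain's Runnable. It only assumes that batch can be built from invoke, and that stream can fall back to a single chunk.

```python
from concurrent.futures import ThreadPoolExecutor


class SimpleRunnable:
    """Toy version of the invoke / batch / stream trio (illustration only)."""

    def __init__(self, func):
        self.func = func

    def invoke(self, value):
        # One input, one output
        return self.func(value)

    def batch(self, values):
        # Run invoke on each input in a thread pool; map preserves input order
        with ThreadPoolExecutor() as pool:
            return list(pool.map(self.invoke, values))

    def stream(self, value):
        # Fallback when no incremental output exists: yield one complete chunk
        yield self.invoke(value)


shout = SimpleRunnable(lambda text: text.upper() + "!")

single = shout.invoke("hi")
many = shout.batch(["hi", "bye"])
chunks = list(shout.stream("bye"))
```

Only invoke is specific to the component. The other two methods are derived from it, so any object that implements invoke gets a usable batch and stream.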

Ways to Combine Components

LangChain supports two composition styles:

Imperative

Call components directly, such as model.invoke(...). This gives full control and uses standard Python.

Declarative

Use LangChain Expression Language (LCEL) to declaratively compose components. LCEL handles parallelism, streaming, and async execution automatically (covered in a later section).

Table 1 summarizes the differences between imperative and declarative composition:

| Feature | Imperative | Declarative (LCEL) |

|---|---|---|

| Syntax | Full Python | LCEL |

| Parallel execution | Threads / coroutines | Automatic |

| Streaming | Manual (yield) | Automatic |

| Async execution | async functions | Automatic |

With the Runnable interface as a foundation, LangChain lets you assemble prompts, models, and parsers into flexible, production-ready LLM pipelines—either step by step or declaratively.
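The "Threads / coroutines" entry in the imperative column means that parallelism is your responsibility. A minimal sketch of what that looks like with the standard library follows. `fake_model_call` is a hypothetical stand-in for a slow model request, so no API key is needed.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fake_model_call(prompt):
    """Stand-in for a network-bound model request (hypothetical)."""
    time.sleep(0.05)  # simulate latency
    return f"answer to: {prompt}"


def answer_both(question_a, question_b):
    """Run two independent calls concurrently and wait for both results."""
    with ThreadPoolExecutor(max_workers=2) as pool:
        future_a = pool.submit(fake_model_call, question_a)
        future_b = pool.submit(fake_model_call, question_b)
        return future_a.result(), future_b.result()


results = answer_both("What is LCEL?", "What is a Runnable?")
```

Because model calls are mostly waiting on the network, threads are usually enough to overlap them. This is the bookkeeping that the declarative style takes over.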

Imperative Composition

Imperative composition is simply writing regular Python code—functions and classes—that combine prompts, models, and parsers. It offers full control and flexibility, using patterns you already know.

Basic Example

Below is a minimal chatbot built by combining a prompt template and a chat model. The @chain decorator wraps the function in LangChain’s Runnable interface, enabling invoke, batch, and stream.

from langchain_openai.chat_models import ChatOpenAI

from langchain_core.prompts import ChatPromptTemplate

from langchain_core.runnables import chain

# Building blocks

template = ChatPromptTemplate.from_messages([

("system", "You are a helpful assistant."),

("human", "{question}"),

])

model = ChatOpenAI()

# Combine components in a function

@chain
def chatbot(values):

    prompt = template.invoke(values)

    return model.invoke(prompt)

# Use the chatbot

chatbot.invoke({"question": "Which model providers offer LLMs?"})

Output

AIMessage(content="Hugging Face, OpenAI, and Cohere offer LLMs.")

This is a complete chatbot implemented with familiar Python syntax, making it easy to insert custom logic.

Adding Streaming Support

To stream output, simply yield tokens from the model. LangChain automatically exposes a stream() method.

@chain
def chatbot(values):

    prompt = template.invoke(values)

    for token in model.stream(prompt):

        yield token

for part in chatbot.stream({

    "question": "Which model providers offer LLMs?"

}):

    print(part)

Output (streamed)

AIMessageChunk(content="Hugging")

AIMessageChunk(content=" Face's")

AIMessageChunk(content=" transformers")

...

Asynchronous Execution (Python)

For async workflows, define the function with async and use the async variants of invoke.

@chain
async def chatbot(values):

    prompt = await template.ainvoke(values)

    return await model.ainvoke(prompt)

await chatbot.ainvoke({

"question": "Which model providers offer LLMs?"

})

Imperative composition gives you maximum flexibility, but streaming and async behavior must be implemented manually. In the next section, declarative composition shows how LangChain can handle these concerns automatically.

Declarative Composition

LCEL (LangChain Expression Language) is a declarative way to compose LangChain components. Instead of writing glue code yourself, you describe how components connect, and LangChain compiles this into an optimized execution plan with automatic streaming, async support, tracing, and parallelization.

Basic LCEL Example

This example is functionally identical to the imperative chatbot, but uses the | operator to compose components.

from langchain_openai.chat_models import ChatOpenAI

from langchain_core.prompts import ChatPromptTemplate

# Building blocks

template = ChatPromptTemplate.from_messages([

("system", "You are a helpful assistant."),

("human", "{question}"),

])

model = ChatOpenAI()

# Declarative composition

chatbot = template | model

# Use it

chatbot.invoke({"question": "Which model providers offer LLMs?"})

Output

AIMessage(content="Hugging Face, OpenAI, and Cohere offer LLMs.")

Crucially, the usage is identical to imperative composition: you still call invoke, batch, or stream.
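The | syntax is ordinary Python operator overloading. The sketch below shows the core idea with a hypothetical `Step` class whose `__or__` method returns a new step that feeds one output into the next input. LangChain's actual composition also handles streaming, async, and tracing, which this sketch omits.

```python
class Step:
    """Toy composable unit (illustration only, not a LangChain class)."""

    def __init__(self, func):
        self.func = func

    def invoke(self, value):
        return self.func(value)

    def __or__(self, other):
        # a | b builds a new Step that runs a, then passes its output to b
        return Step(lambda value: other.invoke(self.invoke(value)))


# Hypothetical stand-ins for a prompt template, a model, and a post-processor
template = Step(lambda values: f"Question: {values['question']}")
fake_model = Step(lambda prompt: f"(model reply to) {prompt}")
to_upper = Step(str.upper)

pipeline = template | fake_model | to_upper
result = pipeline.invoke({"question": "hi"})
```

Because the composed object exposes the same invoke method as its parts, a pipeline can itself be used as a step in a larger pipeline.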

Streaming with LCEL (Automatic)

Unlike imperative composition, no extra code is needed to enable streaming.

for part in chatbot.stream({

    "question": "Which model providers offer LLMs?"

}):

    print(part)

Output (streamed)

AIMessageChunk(content="Hugging")

AIMessageChunk(content=" Face's")

AIMessageChunk(content=" transformers")

...

Async Execution with LCEL (Python)

Async support also works out of the box.

await chatbot.ainvoke({

"question": "Which model providers offer LLMs?"

})

No changes to the composition are required—LangChain handles it automatically.

Summary

In this chapter, you learned the core building blocks for LLM applications in LangChain:

- Models to generate predictions

- Prompts to guide model behavior

- Output parsers to structure results

All LangChain components share a common interface (invoke, stream, batch), making them easy to combine.

You can assemble applications in two ways:

- Imperatively, using standard Python for maximum control and custom logic

- Declaratively, using LCEL for concise pipelines with automatic streaming and async support

Imperative composition is best when you need fine-grained control. Declarative composition shines when you want clean, composable pipelines with minimal boilerplate.

In Chapter 2, you’ll learn how to inject external data as context—enabling LLM applications that can truly chat with your data.