This chapter explores how generative AI can automate and accelerate data science workflows. Large language models (LLMs) are increasingly influential in scientific progress, particularly by improving research data analysis and streamlining literature reviews. Alongside these advances, existing Automated Machine Learning (AutoML) approaches already help data scientists boost productivity and improve the repeatability of their work.

We begin by examining the impact of generative AI on data science, followed by an overview of automation in data science workflows. The chapter then demonstrates how code generation and AI tools can be used to answer data science questions—ranging from simulations to enriching datasets with external information.

Next, the focus shifts to exploratory analysis of structured data. Using agents, we show how to query datasets via SQL or pandas, ask statistical questions, and generate visualizations directly from data.

Throughout the chapter, multiple approaches to applying LLMs in data science are presented.

Table of Contents

Before diving into automation techniques, we begin by discussing how generative AI is reshaping the practice of data science.

The Impact of Generative Models on Data Science

Generative AI and large language models (LLMs) such as GPT-4 are reshaping data science by influencing every stage of the workflow. Their ability to understand and generate human-like text makes them powerful tools for boosting research productivity, accelerating analysis, and supporting decision-making.

Generative AI plays an important role in data exploration and interpretation, helping uncover patterns, correlations, and insights that may be missed by traditional methods. By automating routine analysis tasks, these models free researchers to focus on higher-level reasoning and experimentation.

LLMs also significantly improve literature reviews and research discovery. Models like ChatGPT can summarize large volumes of academic content, surface key themes, and help identify research gaps. This capability was explored in Chapter 4, Building Capable Assistants.

Additional data science use cases include:

- Synthetic data generation: Creating artificial datasets for training models when real data is scarce or sensitive.

- Pattern discovery: Identifying complex patterns not easily detectable by human analysts.

- Feature engineering: Generating new features from existing data to improve model performance.

Industry reports from organizations such as McKinsey and KPMG highlight how generative AI is changing what data scientists work on, how they work, and who can perform data science tasks. Key impacts include:

- Democratization of AI: Non-experts can generate code, text, and insights using simple prompts.

- Increased productivity: Automated generation of code and analysis accelerates workflows.

- Innovation in data science: New ways to explore data and generate hypotheses.

- Industry disruption: Automation and AI-enhanced products create new competitive dynamics.

- Persistent limitations: Accuracy, bias, and controllability issues remain.

- Governance and ethics: Strong oversight is required to ensure responsible AI use.

- Shifting skill demands: Greater emphasis on governance, ethics, and problem framing over pure coding.

Generative AI is also transforming data visualization. Instead of static, two-dimensional charts, AI-driven tools can generate interactive and even three-dimensional visualizations, making data more accessible and improving interpretation for a broader audience.

One of the most profound effects of generative AI is the democratization of data science. Tasks that once required deep expertise in statistics and machine learning are increasingly accessible to users with limited technical backgrounds, expanding participation across organizations.

In automated data science, LLMs offer several advantages:

- Natural language interaction: Users can explore data using plain language.

- Code generation: Automatic creation of SQL, pandas, or visualization code during EDA.

- Automated reporting: Generation of summaries covering statistics, correlations, and insights.

- Exploration and visualization: Automated discovery of patterns, anomalies, and relationships.

Over time, generative AI systems can adapt to user behavior, learning preferences and improving recommendations through feedback. They can also assist with intelligent error detection, identifying inconsistencies and anomalies in datasets during exploratory analysis.

Overall, LLMs enhance automated EDA by simplifying interaction, accelerating analysis, generating insights, and scaling exploration across large and complex datasets.

However, these models are not infallible. LLMs rely on pattern matching rather than true reasoning and can struggle with mathematics and factual accuracy. Their strength lies in creativity, not precision, requiring researchers to validate outputs and apply rigorous scientific judgment.

A notable real-world example is Microsoft Fabric, which integrates generative AI through a conversational interface. Users can query data in natural language and receive immediate insights, reducing reliance on traditional data request workflows. Fabric provides role-specific experiences for data engineers, analysts, scientists, and business users, with Azure OpenAI integrated throughout the platform.

Features such as Copilot in Microsoft Fabric enable users to create dataflows, generate code, build machine learning models, visualize results, and develop conversational analytics experiences.

Despite these advances, risks remain. Tools like ChatGPT and Fabric may generate incorrect SQL or analyses. While this is manageable for experienced analysts, it poses challenges for non-technical self-service users. Reliable data pipelines, strong data quality practices, and expert oversight are essential.

In summary, generative AI offers substantial promise for data analytics, research, and exploration—but its outputs must be validated through first-principles reasoning and domain expertise. These models excel at ideation, summarization, and exploratory analysis, yet should complement, not replace, rigorous analytical practice.

Automated Data Science

Data science combines computer science, statistics, and business analytics to extract insights from data and support decision-making. Typical responsibilities include data collection, cleaning, analysis, visualization, and building predictive models. While essential, these tasks are often complex and time-consuming.

Automating parts of the data science workflow allows practitioners to focus on creative problem-solving and higher-value analysis. Modern tools streamline repetitive steps, enable faster iteration, and reduce manual coding. In this respect, data science increasingly overlaps with software development—particularly in writing, maintaining, and deploying model-centric code, as discussed in Chapter 6, Developing Software with Generative AI.

Platforms such as KNIME, H2O, and RapidMiner provide end-to-end analytics engines for data preprocessing, feature extraction, and model building. When combined with LLM-powered tools like GitHub Copilot or Jupyter AI, these platforms enable code generation for data processing, analysis, and visualization directly from natural language prompts. Jupyter AI, for example, allows users to converse with a virtual assistant to explain code, detect errors, and generate notebooks.

Figure 1 shows the Jupyternaut chat interface in Jupyter AI, illustrating how an embedded conversational assistant can support everyday data science tasks.

Having an interactive chat interface readily available to ask questions, create functions, or modify existing code can significantly enhance data scientist productivity.

Overall, automated data science accelerates analytics and machine learning development, enabling practitioners to focus on insight generation rather than manual implementation. A key goal is also the democratization of data science, allowing business analysts and non-specialists to engage more directly with data. In the following sections, we explore how generative AI improves efficiency across core tasks, including data collection, visualization and EDA, preprocessing and feature engineering, and AutoML.

Data Collection

Automated data collection refers to gathering data with minimal or no human intervention. For organizations, this enables faster, more reliable data acquisition while freeing human resources for higher-value tasks.

In data science, data collection is commonly part of ETL (Extract, Transform, Load) pipelines, which not only ingest data from multiple sources but also prepare it for downstream analytics and modeling.

A wide range of ETL tools is available. Commercial options include AWS Glue, Google Dataflow, dbt, Fivetran, Microsoft SSIS, Talend, and others, while open-source solutions include Airflow, Kafka, and Spark. In Python ecosystems, tools such as pandas, along with orchestration frameworks like Celery and joblib, are frequently used.

LangChain integrates with Zapier, enabling automation across applications and services for data collection. Combined with LLMs, these tools accelerate data ingestion and processing, particularly for organizing and extracting value from unstructured data.

Selecting the right data collection tool depends on factors such as data type, volume, integration complexity, and budget.

Visualization and Exploratory Data Analysis (EDA)

EDA involves exploring and summarizing datasets to identify patterns, inconsistencies, and assumptions before modeling. As datasets grow larger and more complex, automated EDA has become increasingly important.

Automated EDA and visualization tools analyze datasets with minimal manual intervention, speeding up tasks such as cleaning, missing-value handling, outlier detection, and feature exploration. They also generate interactive visualizations that provide a comprehensive view of complex data.

Generative AI further enhances automated EDA by creating visualizations directly from user prompts, making data exploration and interpretation more accessible to a broader audience.

Preprocessing and Feature Extraction

Automated preprocessing includes data cleaning, integration, transformation, and feature extraction—closely aligned with the transform phase of ETL. Automated feature engineering is increasingly critical for extracting maximum value from real-world data.

LLMs can automate preprocessing workflows by cleaning, transforming, and integrating data. This automation can reduce human handling of sensitive information, supporting improved privacy management. However, automatically generated features may lack transparency, raising concerns around interpretability, bias, and error propagation. Efficiency gains must therefore be balanced with robust validation and governance.

AutoML

AutoML frameworks represent a major advancement in machine learning by automating the end-to-end model lifecycle, including data preparation, feature selection, model training, and hyperparameter tuning. These frameworks significantly reduce development time while often improving model quality.

Figure 2 illustrates the core idea behind AutoML using a diagram from the mljar AutoML library.

AutoML systems enhance usability and productivity by enabling rapid experimentation and deployment within standard development environments. Early frameworks such as Auto-WEKA paved the way for today’s diverse ecosystem, which includes auto-sklearn, AutoKeras, Auto-PyTorch, TPOT, Optuna, AutoGluon, Ray Tune, and others.

Modern AutoML platforms—such as Google AutoML, Azure AutoML, and H2O—support both structured and unstructured data, including images, audio, and video. Many perform extensive hyperparameter searches that rival or exceed manual tuning. Tools like PyCaret simplify training multiple models with minimal code, while time-series–focused frameworks such as StatsForecast and MLForecast address specialized use cases.

Key characteristics of AutoML frameworks include:

- Deployment support, particularly in cloud environments

- Broad data type coverage, with strong support for tabular data

- Explainability features, critical for regulated domains

- Post-deployment monitoring to ensure ongoing model performance

Despite their advantages, AutoML systems often function as black boxes, making debugging and interpretability challenging. While they democratize machine learning, they may struggle with highly complex or domain-specific tasks.

The integration of LLMs into AutoML revitalizes the space by improving automation in feature selection, model tuning, and experimentation. LLM-powered AutoML can also generate synthetic data, reducing reliance on sensitive datasets. However, safety mechanisms and validation remain essential to prevent error propagation and bias amplification.

Although LLM-enhanced AutoML simplifies model development, users must still make informed decisions about evaluation, deployment, and governance.

Using Agents to Answer Data Science Questions

Tools such as LLMMathChain can be used to execute Python-backed computations for answering numerical queries. We’ve already seen several agent-and-tool combinations, and this is a simple illustration of chaining an LLM with a mathematical tool to compute results programmatically:

from langchain import OpenAI, LLMMathChain

llm = OpenAI(temperature=0)

llm_math = LLMMathChain.from_llm(llm, verbose=True)

llm_math.run(

"What is 2 raised to the 10th power?"

)

This produces output similar to the following:

> Entering new LLMMathChain chain...

What is 2 raised to the 10th power?

2**10

numexpr.evaluate("2**10")

Answer: 1024

> Finished chain.

[2]:'Answer: 1024'

While tools like LLMMathChain excel at deterministic computations, they are less directly applicable to standard EDA workflows. Other chains—such as PALChain and CPALChain—aim to improve reasoning reliability and reduce hallucinations, but their real-world adoption remains limited.

Using PythonREPLTool, agents can execute arbitrary Python code, making it possible to create visualizations, generate synthetic data, or prototype models. The following example from the LangChain documentation demonstrates building and training a simple neural network using PyTorch:

from langchain.agents.agent_toolkits import create_python_agent

from langchain.tools.python.tool import PythonREPLTool

from langchain.llms.openai import OpenAI

from langchain.agents.agent_types import AgentType

agent_executor = create_python_agent(

llm=OpenAI(temperature=0, max_tokens=1000),

tool=PythonREPLTool(),

verbose=True,

agent_type=AgentType.ZERO_SHOT_REACT_DESCRIPTION,

)

agent_executor.run(

"""Understand, write a single neuron neural network in PyTorch.

Take synthetic data for y=2x. Train for 1000 epochs and print every 100 epochs.

Return prediction for x = 5"""

)

The agent executes the full training loop locally and returns a prediction. Verbose logs show loss decreasing over training epochs until convergence:

Final Answer: The prediction for x = 5 is y = 10.00.

This example illustrates how agents can generate, execute, and iterate on code autonomously. However, running unrestricted Python code introduces security risks and would require safeguards in production systems. Scaling such approaches also demands more robust engineering than these illustrative examples suggest.

Agents can also be used for data enrichment by combining LLMs with external tools. For example, to compute distances between cities using WolframAlpha, the following setup can be used:

from langchain.agents import load_tools, initialize_agent

from langchain.llms import OpenAI

from langchain.chains.conversation.memory import ConversationBufferMemory

llm = OpenAI(temperature=0)

tools = load_tools(['wolfram-alpha'])

memory = ConversationBufferMemory(memory_key="chat_history")

agent = initialize_agent(

tools,

llm,

agent="conversational-react-description",

memory=memory,

verbose=True

)

agent.run(

"""How far are these cities to Tokyo?

* New York City

* Madrid, Spain

* Berlin

"""

)

After setting the required OPENAI_API_KEY and WOLFRAM_ALPHA_APPID environment variables, the agent returns distances for each city relative to Tokyo.

By integrating LLMs with tools like WolframAlpha, agents can perform practical enrichment tasks—such as geographic calculations—that enhance the value of business datasets. While these examples focus on relatively simple queries, applying such techniques at scale requires more comprehensive tool orchestration, validation, and governance.

Crucially, agents can also be given structured datasets to work with. When combined with additional tools, this unlocks far more powerful workflows—allowing agents to answer complex analytical questions directly over data, which we explore next.

Data Exploration with LLMs

Data exploration is a foundational step in data analysis, enabling researchers to understand datasets and uncover meaningful insights. With the emergence of LLMs such as ChatGPT, natural language interfaces can now significantly simplify and accelerate this process.

As discussed earlier, generative AI models excel at understanding and producing human-like responses. Asking questions in plain language and receiving concise, structured answers lowers the barrier to exploration and boosts analytical productivity.

LLMs can assist with exploring textual, numerical, and even multimedia data. Researchers can query statistical properties of numerical datasets, explore trends, or reason about visual outputs in image-based tasks.

To illustrate this, let’s load a dataset from scikit-learn:

from sklearn.datasets import load_iris

df = load_iris(as_frame=True)["data"]

The Iris dataset is a well-known toy dataset that serves as a convenient example. We can now create a pandas DataFrame agent to interact with this data using natural language:

from langchain.agents import create_pandas_dataframe_agent

from langchain import PromptTemplate

from langchain.llms.openai import OpenAI

PROMPT = (

"If you do not know the answer, say you don't know.\n"

"Think step by step.\n\n"

"Below is the query.\n"

"Query: {query}\n"

)

prompt = PromptTemplate(template=PROMPT, input_variables=["query"])

llm = OpenAI()

agent = create_pandas_dataframe_agent(llm, df, verbose=True)

The prompt explicitly encourages uncertainty handling and step-by-step reasoning to reduce hallucinations. We can now query the dataset directly:

agent.run(prompt.format(query="What's this dataset about?"))

The agent correctly explains that the dataset contains measurements of flowers.

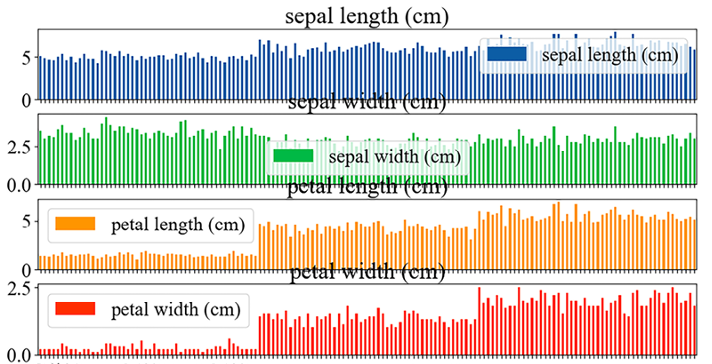

We can also request visualizations:

agent.run(prompt.format(query="Plot each column as a barplot!"))

This produces the following visualization:

The resulting plot may require refinement, as outputs depend on the model and instructions. In this case, df.plot.bar(rot=0, subplots=True) was used. Additional tuning—such as spacing, fonts, or legends—could improve presentation quality.

Requesting distribution plots produces another useful visualization:

Other plotting backends, such as Seaborn, can also be requested if installed.

Beyond visualization, we can ask analytical questions. For example, identifying the row with the largest difference between petal length and petal width yields an answer along with intermediate reasoning steps, culminating in:

Final Answer: Row 122 has the largest difference between petal length and petal width.

This showcases the agent’s ability to derive new features and reason over the data.

LLMs can also perform statistical testing through natural language prompts:

agent.run(prompt.format(

query="Validate the following hypothesis statistically: petal width and petal length come from the same distribution."

))

The agent applies a Kolmogorov–Smirnov test and correctly concludes—based on a very small p-value—that the two variables come from different distributions.

Alternative libraries such as PandasAI, which builds on LangChain, provide similar functionality. The following example demonstrates querying a dataset conversationally:

print(smart_df.chat("Which are the 5 happiest countries?"))

Note that PandasAI is not included in the tutorial setup and must be installed separately.

For SQL-based data, LangChain provides SQLDatabaseChain, enabling natural language queries over databases:

db_chain.run("How many employees are there?")

This approach is especially useful when database schemas are unfamiliar. The chain can generate, validate, and even autocorrect SQL queries when configured appropriately.

Overall, these examples demonstrate how LLM-powered agents can transform data exploration. By enabling natural language queries over DataFrames and databases, they simplify EDA, automate visualization and statistical testing, and lower the barrier to insight generation—while still requiring careful validation and human oversight.

Summary

This chapter examined AutoML frameworks, highlighting their role in streamlining the full data science pipeline—from data prep to model deployment. We then explored how integrating LLMs boosts productivity and makes data science more accessible to both technical and non-technical users.

In code generation, we saw parallels with software development (Chapter 6, Developing Software with Generative AI), where LLMs create functions, respond to queries, or augment datasets, including via third-party tools like WolframAlpha.

Next, we focused on LLMs for data exploration, extending techniques from Chapter 4 (Building Capable Assistants) on processing large text corpora. Here, the attention shifted to structured data, such as SQL databases and tabular datasets, demonstrating how LLMs can drive exploratory analysis.

Overall, AI platforms like ChatGPT plugins and Microsoft Fabric show transformative potential in data analysis. While these tools enhance data scientists’ work, they currently augment rather than replace human expertise.

The next chapter will cover conditioning techniques to boost LLM performance through prompting and fine-tuning.