So far, this tutorial has explored generative models in real-world applications — from large language models and image generation to agents, tool use, retrieval-augmented generation, prompting, and fine-tuning. We’ve also built simple applications for developers and data scientists.

This chapter looks ahead.

AI progress has accelerated rapidly, with breakthroughs like DALL·E, Midjourney, and ChatGPT delivering human-level creative output — from photorealistic images to code and conversation. Investment in generative AI surged in 2022, rivaling the previous five years combined, while companies like Salesforce and Accenture committed billions to AI initiatives. Customizing foundation models for specific use cases is emerging as a major value driver, though it remains unclear whether big tech, startups, or model creators will capture most of the gains.

Technically, many generative models remain black boxes, with limited interpretability and potential bias from training data. Their heavy computational demands also create barriers for organizations lacking infrastructure.

On the upside, AI democratizes creativity — enabling high-quality output in writing, design, and analysis — while reducing costs and speeding workflows. On the downside, automation threatens specialized roles such as designers, lawyers, and doctors, while misuse risks include deepfakes, scams, propaganda, and weaponization.

The path forward requires balancing innovation with responsibility — addressing risks through transparency, policy, and human-centered design to ensure AI benefits society broadly.

We begin with the current state of generative models and their capabilities.

Current State of Generative AI

In recent years, generative AI has achieved major milestones in producing human-like content across text, images, audio, and video. Leading models such as OpenAI’s GPT-4 and DALL·E 2, Google’s Imagen and Parti, and Anthropic’s Claude demonstrate remarkable language fluency and creative visual output.

Between 2022 and 2023, progress accelerated dramatically. Where models once produced incoherent text or grainy images, they now generate high-quality prose, realistic images, 3D models, and videos — often rivaling human creativity. Powered by massive datasets and computational scale, these systems can translate, summarize, answer questions, synthesize knowledge, and even describe images. Their outputs increasingly resemble human ingenuity: writing poetry, creating art, and blending information from diverse sources.

However, these strengths come with clear limitations. Generative models frequently hallucinate — producing confident but incorrect information — due to pattern learning rather than true understanding. They struggle with mathematical, logical, and causal reasoning, and can be confused by complex inference. Their black-box nature limits transparency and control, making debugging difficult. In addition, biases embedded in training data can be reflected and amplified, raising serious ethical concerns.

Table: Strengths and Deficiencies of Current LLMs

| Strengths of LLMs | Deficiencies of LLMs |
|---|---|
| Language Fluency – Coherent, contextual text and dialogue; GPT-4 produces human-level prose | Factual Accuracy – Hallucinations and lack of grounding in reality |
| Knowledge Synthesis – Aggregates and summarizes information across sources | Logical Reasoning – Weak at math, causality, and complex inference |
| Creative Output – Original text, art, and music (e.g., Claude’s poetry, DALL·E’s images) | Controllability – Difficult to constrain behavior; risk of harmful outputs |
| | Bias – Can propagate societal biases from training data |
| | Transparency – Limited explainability (“black box” problem) |

While generative AI has advanced rapidly, these weaknesses must be addressed for reliable real-world deployment. Still, the technology’s transformative potential remains enormous if developed and governed responsibly. The next section explores the technical challenges arising from these limitations.

Technical Challenges

Despite rapid progress, major technical hurdles remain before generative AI can be deployed safely, reliably, and at scale. Many of these challenges — introduced in earlier chapters — center on quality, control, reasoning, transparency, privacy, and efficiency.

Table: Technical Challenges and Potential Solutions

| Challenge | Description | Potential Solutions |
|---|---|---|
| Realistic & Diverse Content Generation | Models struggle with logical consistency, factual plausibility, and nuanced output; results can be repetitive and bland | Reinforcement learning from human feedback (RLHF), data augmentation, synthesis techniques, modular domain knowledge |
| Output Quality Control | Limited ability to constrain generated content; occasional harmful, biased, or nonsensical outputs | Constrained optimization, moderation systems, real-time correction techniques |
| Avoiding Bias | Models amplify societal biases embedded in training data | Balanced datasets, bias mitigation algorithms, continuous audits |
| Factual Accuracy | Weak grounding in objective truths and common sense | Knowledge bases, hybrid neuro-symbolic models, retrieval-augmented generation |
| Explainability | Black-box behavior limits debugging and trust | Model introspection, concept attribution, simplified architectures |
| Data Privacy | Risks around consent, misuse, and sensitive data | Differential privacy, secure computation, synthetic data, federated learning |
| Latency & Compute | Large models demand heavy resources, limiting real-time use | Model distillation, optimized inference, AI hardware accelerators |
| Data Licensing | Complex legal and cost barriers for training datasets | Open-source data, synthetic data generation |

One core limitation is content realism and diversity. While models generate fluent language and creative output, they often lack logical coherence and human nuance. Techniques like RLHF, controlled data synthesis, and domain-aware architectures aim to improve quality.
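RLHF usually begins by training a reward model on pairs of responses that humans have ranked. The sketch below shows the pairwise (Bradley-Terry style) loss commonly used for that step. It assumes some reward model has already assigned a scalar score to each response. The function names and example scores are hypothetical.

```python
import math


def pairwise_reward_loss(score_chosen: float, score_rejected: float) -> float:
    """Loss for one human preference pair: -log(sigmoid(chosen - rejected)).

    The loss shrinks as the reward model scores the human-preferred
    response further above the rejected one.
    """
    margin = score_chosen - score_rejected
    # Numerically stable softplus(-margin) = log(1 + exp(-margin))
    return max(-margin, 0.0) + math.log1p(math.exp(-abs(margin)))


def mean_preference_loss(pairs: list[tuple[float, float]]) -> float:
    """Average loss over (chosen_score, rejected_score) pairs."""
    return sum(pairwise_reward_loss(c, r) for c, r in pairs) / len(pairs)


# Hypothetical scores for two ranked pairs
print(mean_preference_loss([(1.2, 0.3), (0.1, 0.9)]))
```

A reward model trained this way then supplies the signal for a reinforcement learning step, commonly PPO, that fine-tunes the generator.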

Another challenge is output control. Current systems cannot consistently prevent harmful or nonsensical responses, making moderation and real-time correction essential.
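A simple form of output control is to block disallowed tokens at decoding time so they can never be sampled. This is a deliberately minimal stand-in for the constrained-optimization and moderation approaches listed in the table, not how any particular production system works. The token strings, scores, and blocklist are hypothetical.

```python
import math


def masked_next_token_probs(logits: dict[str, float], banned: set[str]) -> dict[str, float]:
    """Softmax over candidate tokens after removing banned ones.

    Banned tokens receive probability 0.0; the rest are renormalized.
    """
    allowed = {tok: score for tok, score in logits.items() if tok not in banned}
    if not allowed:
        raise ValueError("every candidate token is banned")
    peak = max(allowed.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(score - peak) for tok, score in allowed.items()}
    total = sum(exps.values())
    probs = {tok: value / total for tok, value in exps.items()}
    probs.update({tok: 0.0 for tok in logits if tok in banned})
    return probs


# Hypothetical scores: the highest-scoring token is on the blocklist
print(masked_next_token_probs({"safe": 1.0, "neutral": 1.0, "unsafe": 5.0}, {"unsafe"}))
```

A token-level mask cannot catch harmful meaning spread across otherwise innocuous tokens, which is why output-level moderation is still needed alongside it.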

Bias remains a persistent issue, as models inherit prejudices from training data. Fairer datasets, mitigation algorithms, and continuous auditing help reduce these risks.
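A continuous audit typically tracks one or more fairness metrics over time. Here is a minimal sketch of one such metric, the demographic parity gap. It assumes binary model decisions (1 = positive outcome) and a recorded group label for each example. The data is made up for illustration.

```python
from collections import defaultdict


def positive_rates(predictions: list[int], groups: list[str]) -> dict[str, float]:
    """Fraction of positive (1) decisions within each group."""
    tallies = defaultdict(lambda: [0, 0])  # group -> [positives, total]
    for pred, group in zip(predictions, groups):
        tallies[group][0] += pred
        tallies[group][1] += 1
    return {group: pos / total for group, (pos, total) in tallies.items()}


def demographic_parity_gap(predictions: list[int], groups: list[str]) -> float:
    """Largest difference in positive rate between any two groups (0.0 = parity)."""
    rates = positive_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


# Made-up audit batch: group "a" is approved twice as often as group "b"
preds = [1, 1, 0, 1, 0, 0]
groups = ["a", "a", "a", "b", "b", "b"]
print(positive_rates(preds, groups), demographic_parity_gap(preds, groups))
```

Demographic parity is only one fairness criterion. Others, such as equalized odds, compare error rates across groups instead, and different criteria can conflict with one another.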

Factual reasoning is also limited. Models struggle with objective truth and real-world grounding, prompting interest in hybrid symbolic systems, knowledge integration, and retrieval-based methods.
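The retrieval step of retrieval-augmented generation can be sketched as follows. Word-count cosine similarity stands in for the dense embeddings and vector index a production system would normally use. The documents, function names, and prompt wording are illustrative assumptions.

```python
import math
import re
from collections import Counter


def bag_of_words(text: str) -> Counter:
    """Lowercased word counts; a crude stand-in for a learned embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: list[str], k: int = 1) -> list[str]:
    """Return the k documents most similar to the query."""
    q = bag_of_words(query)
    ranked = sorted(documents, key=lambda d: cosine(q, bag_of_words(d)), reverse=True)
    return ranked[:k]


def build_grounded_prompt(query: str, documents: list[str], k: int = 1) -> str:
    """Prepend retrieved passages so the model answers from supplied evidence."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return f"Answer using only the context below.\nContext:\n{context}\nQuestion: {query}"


docs = ["The Eiffel Tower is in Paris.", "Mount Fuji is in Japan."]
print(build_grounded_prompt("Where is the Eiffel Tower?", docs))
```

Grounding the prompt in retrieved text does not guarantee a correct answer, but it gives the model, and the reader, concrete evidence to check against.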

Explainability is hindered by black-box neural networks, complicating trust and debugging. Research into interpretable models and attribution techniques seeks to address this.
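Attribution methods try to explain which inputs drove a particular prediction. The sketch below shows occlusion-based attribution, which is one simple, model-agnostic technique among many. It assumes the model is any function that maps a feature vector to a score. The toy linear model is hypothetical.

```python
from typing import Callable, Sequence


def occlusion_attribution(
    model: Callable[[Sequence[float]], float],
    x: Sequence[float],
    baseline: float = 0.0,
) -> list[float]:
    """Attribute a prediction to each input feature by occlusion.

    Each score is the drop in model output when that one feature is replaced
    by `baseline` while every other feature keeps its original value.
    """
    reference = model(x)
    scores = []
    for i in range(len(x)):
        occluded = list(x)
        occluded[i] = baseline
        scores.append(reference - model(occluded))
    return scores


def toy_model(v: Sequence[float]) -> float:
    """Hypothetical linear scorer used only to exercise the function."""
    return 2.0 * v[0] + 3.0 * v[1] - 1.0 * v[2]


print(occlusion_attribution(toy_model, [1.0, 1.0, 1.0]))  # [2.0, 3.0, -1.0]
```

For a linear model, each score reduces to the weight times (input minus baseline), which makes a handy sanity check. For deep networks, occlusion is expensive because it needs one forward pass per feature, and it can miss interactions between features.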

Privacy concerns grow as datasets scale, requiring solutions such as differential privacy, federated learning, and synthetic data.
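Differential privacy is often introduced through the Laplace mechanism. The idea is to add calibrated noise to an aggregate statistic so that no single person's record noticeably changes the released value. Below is a minimal sketch for a counting query. The epsilon value, the seeded generator, and the example count are illustrative choices. Training-time methods such as DP-SGD are considerably more involved.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Draw from Laplace(0, scale) as the difference of two exponential samples."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Release a count under epsilon-differential privacy (Laplace mechanism).

    Adding or removing one person's record changes a count by at most 1
    (sensitivity 1), so noise with scale sensitivity / epsilon is enough.
    """
    sensitivity = 1.0
    return true_count + laplace_noise(sensitivity / epsilon, rng)


rng = random.Random(42)  # seeded only so the sketch is reproducible
print(private_count(1_204, epsilon=0.5, rng=rng))  # hypothetical record count
```

Smaller epsilon values give stronger privacy guarantees but noisier answers. Choosing epsilon is as much a policy decision as a technical one.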

Finally, massive compute demands introduce latency and cost barriers. Model compression, efficient inference, and specialized hardware are critical for real-time applications.
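Compression is one route to lower latency and cost. The sketch below shows symmetric int8 post-training quantization, which is one common compression technique; distillation and pruning are others. Each weight is stored in 8 bits, and the whole tensor shares a single floating-point scale. The example weights are hypothetical.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor int8 quantization: each weight w ≈ scale * q."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the int8 range [-127, 127]
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Map int8 codes back to approximate floating-point weights."""
    return [scale * v for v in q]


weights = [0.5, -1.0, 0.25, 0.0]  # hypothetical layer weights
codes, scale = quantize_int8(weights)
print(codes, [round(w, 4) for w in dequantize(codes, scale)])
```

Storing 8-bit codes instead of 32-bit floats cuts weight memory roughly fourfold. In exchange, each weight carries a rounding error of at most half a quantization step.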

As these challenges are addressed, generative AI will become increasingly capable and dependable. Next, we explore emerging future capabilities.

Possible Future Capabilities

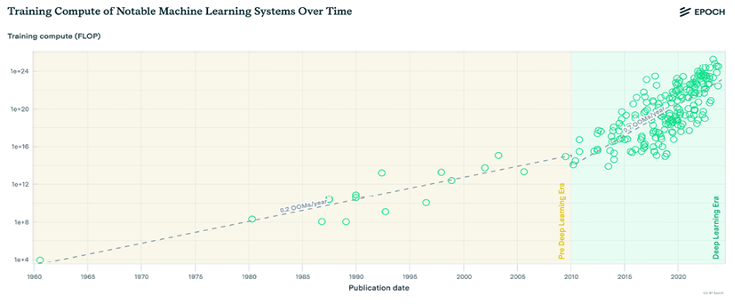

Training compute for large AI models is currently doubling roughly every eight months — far faster than Moore’s Law or hardware cost reductions.

As compute and parameter counts grow, scaling laws suggest performance rises predictably with larger models, bigger datasets, and higher training budgets. The Kaplan (KM) scaling law highlights power-law relationships between model size, data, and compute, while DeepMind’s Chinchilla law identifies optimal tradeoffs between model size and data volume for a fixed compute budget. Together, these trends imply that increasingly powerful systems could remain concentrated among resource-rich tech firms.
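Both scaling laws can be made concrete with a few lines of arithmetic. The constants below are the approximate fits reported by Kaplan et al. (2020) for loss versus non-embedding parameter count, and they are included only for illustration. The allocation function uses the common approximation C ≈ 6·N·D (training FLOPs from parameters N and tokens D), together with the widely cited Chinchilla rule of thumb of about 20 tokens per parameter.

```python
import math

# Approximate fitted constants from Kaplan et al. (2020); illustrative, not exact
ALPHA_N = 0.076
N_C = 8.8e13


def kaplan_loss(n_params: float) -> float:
    """Power-law loss L(N) = (N_c / N) ** alpha_N when data and compute are not limiting."""
    return (N_C / n_params) ** ALPHA_N


def chinchilla_allocation(compute_flops: float, tokens_per_param: float = 20.0) -> tuple[float, float]:
    """Split a FLOP budget between parameters N and training tokens D.

    With C ≈ 6 * N * D and D ≈ tokens_per_param * N, we get
    C ≈ 6 * tokens_per_param * N**2, so N = sqrt(C / (6 * tokens_per_param)).
    """
    n_params = math.sqrt(compute_flops / (6.0 * tokens_per_param))
    n_tokens = tokens_per_param * n_params
    return n_params, n_tokens


n, d = chinchilla_allocation(5.76e23)
print(f"params ≈ {n:.3g}, tokens ≈ {d:.3g}")
print(kaplan_loss(2e9) / kaplan_loss(1e9))  # loss ratio when parameters double
```

For a budget of about 5.76 × 10^23 FLOPs, the allocation comes out to roughly 69 billion parameters and 1.4 trillion tokens, close to the published 70B-parameter Chinchilla configuration. The Kaplan ratio shows why raw scale is costly: doubling parameters trims loss by only about 5%.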

However, future progress is unlikely to rely on size alone. Energy limits and costs constrain indefinite scaling, while research increasingly shows that smaller, high-quality models can perform remarkably well. Examples such as phi-1 demonstrate that improved data quality can dramatically reshape scaling outcomes, and other studies show substantial model compression with minimal accuracy loss. This points toward a future where massive foundation models coexist with efficient, specialized systems.

Techniques like transfer learning, distillation, prompting, and tool integration (search engines, calculators, multi-step agents) allow smaller models to leverage large-model intelligence without incurring massive costs.
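Distillation trains a small "student" model to match the output distribution of a large "teacher" model. The sketch below shows the temperature-softened loss from Hinton et al.'s knowledge distillation. It assumes both models produce logits over the same set of classes or tokens, and the example logits are made up. In practice, this term is usually mixed with an ordinary cross-entropy loss on the true labels.

```python
import math


def softmax(logits: list[float], temperature: float = 1.0) -> list[float]:
    """Temperature-scaled softmax; higher temperatures give softer distributions."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits: list[float], teacher_logits: list[float],
                      temperature: float = 2.0) -> float:
    """KL(teacher || student) on softened distributions, scaled by temperature**2."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return temperature ** 2 * kl


print(distillation_loss([2.0, 1.0, 0.1], [3.0, 1.0, 0.2]))
```

The temperature exposes the teacher's relative preferences among "wrong" answers. The temperature-squared factor keeps gradient magnitudes comparable as the temperature changes.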

Training costs are also falling rapidly — estimates suggest around 70% per year — enabling broader experimentation. Innovation is shifting from raw scale toward better data, training regimes, and architectures.
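To see what a roughly 70% annual decline implies, the arithmetic compounds as shown below. This is purely illustrative: it assumes the rate holds constant, which real cost curves rarely do.

```python
import math


def cost_after(initial_cost: float, annual_decline: float, years: float) -> float:
    """Cost after compounding a fractional decline (0.70 = 70%) every year."""
    return initial_cost * (1.0 - annual_decline) ** years


def years_until_fraction(annual_decline: float, target_fraction: float) -> float:
    """Years until cost falls to `target_fraction` of today's cost."""
    return math.log(target_fraction) / math.log(1.0 - annual_decline)


# Illustrative only: assumes the ~70% yearly decline holds constant
for year in range(4):
    print(year, round(cost_after(1_000_000, 0.70, year)))
print(years_until_fraction(0.70, 0.01))  # years to reach 1% of today's cost
```

Under this assumption, a training run costing $1,000,000 today would cost about $90,000 two years later, and would fall to 1% of its original cost in about 3.8 years.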

Toward Democratized AI

Over the next 3–5 years, declining compute costs and wider AI expertise could allow many organizations to train customized models tailored to privacy, personalization, and domain needs — reducing dependence on centralized providers.

That said, some capabilities such as in-context learning appear only in very large models, fueling speculation about artificial general intelligence (AGI). Yet neuroscience and current AI research suggest such fears are exaggerated:

- Lack of embodied learning: Models are trained mainly on text, not real-world sensory experience

- Non-biological architecture: Transformers lack the complex structures of human brains

- Narrow intelligence: Weak in causal reasoning, planning, and general problem solving

- No intent or social cognition: No goals beyond training objectives

- Limited real-world grounding: Knowledge remains shallow compared to humans

- Pattern-based learning: Struggles with true generalization

These limitations make near-term superintelligent AI highly unlikely. While long-term safety research is wise, claims of imminent AGI lack strong scientific support.

Broader Implications

Still, the speed of progress raises concerns around job displacement — especially in cognitive professions previously considered safe from automation. Managing this transition equitably will be critical. Philosophical debates also emerge around AI-generated art and creativity, long viewed as uniquely human.

Next, we turn to the broader societal impacts of generative AI.

Societal Implications

Highly capable generative AI is poised to reshape society across technology, business, and human interaction. While these models unlock major opportunities, their widespread adoption also raises ethical, economic, and legal concerns that must be addressed alongside innovation.

Benefits and Economic Transformation

Generative AI can significantly boost creativity, productivity, and access to services such as healthcare, education, and finance. By democratizing knowledge and automating routine tasks, these systems help individuals learn faster, make better decisions, and focus on higher-value work. Virtual assistants, content generation, and intelligent decision support already illustrate this shift.

Economically, productivity gains will likely disrupt existing job categories while creating new roles centered on AI development, oversight, and integration. As model performance improves and costs decline, adoption will accelerate across industries.

A reinforcing cost curve further amplifies this expansion. According to Wright’s Law, costs tend to fall by 10–30% each time cumulative production doubles, driven by learning effects, process optimization, and tool reuse. In AI, this creates a feedback loop: lower costs fuel wider adoption, which in turn drives further efficiency and innovation.
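Wright's Law is usually written as a power law in cumulative production: if unit cost falls by a fraction r with every doubling, the cost of the n-th unit is C₁ · n^log₂(1 − r). The sketch below simply evaluates that formula. The first-unit cost and the 20% rate are example values chosen from within the 10–30% range above.

```python
import math


def wrights_law_cost(first_unit_cost: float, cumulative_units: float,
                     drop_per_doubling: float) -> float:
    """Unit cost after `cumulative_units` have been produced.

    `drop_per_doubling` is the fractional cost reduction each time cumulative
    output doubles (0.2 means 20%), giving cost = C1 * n ** log2(1 - r).
    """
    exponent = math.log2(1.0 - drop_per_doubling)
    return first_unit_cost * cumulative_units ** exponent


for units in (1, 2, 4, 1024):
    print(units, round(wrights_law_cost(100.0, units, 0.20), 2))
```

With a 20% drop per doubling, ten doublings (about 1,000 times more cumulative production) cut unit cost to roughly 11% of the first unit, since 0.8^10 ≈ 0.107.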

Industrial and Creative Impact

Across industries, generative AI is transforming workflows:

- Content creation: rapid drafting for marketing, journalism, and media

- Software development: automated code generation and faster iteration

- Research: accelerated literature synthesis and discovery

- Personalization: hyper-targeted recommendations and services

From design to supply chains, productivity gains are likely to be substantial.

Centralized vs. Customized Models

Two parallel adoption paths are emerging:

- Centralized foundation models offered by large tech firms with massive compute and expertise

- Customized in-house models trained on proprietary data for privacy and specialization

While bespoke models offer personalization and data control, they currently face high costs, talent shortages, and privacy risks. Large centralized systems retain advantages in generalization and scale, but as tools improve and costs fall, in-house and community-level AI may become increasingly viable.

The long-term balance will depend on how fast compute becomes cheaper, skills spread, and development tools simplify. Platform dominance could maintain concentration — but distributed innovation may ultimately unlock broader societal value.

Legal, Cultural, and Ethical Questions

Generative AI also intersects with remix culture — creating new works by recombining existing content at massive scale. While this enables unprecedented creativity, it raises difficult issues around:

- Copyright and ownership

- Attribution and royalties

- Detecting AI-generated content

Current tools struggle to reliably identify AI-authored material, challenging traditional notions of authorship and intellectual property.

Generative AI’s societal impact will extend far beyond efficiency gains — reshaping labor markets, creativity, legal frameworks, and access to knowledge. Next, we explore its near-term influence across specific domains, beginning with creative industries.

Creative Industries and Advertising

Generative AI is rapidly transforming gaming, entertainment, media, and advertising by enabling immersive experiences and large-scale personalized content. By automating creative tasks, these tools boost efficiency while opening new revenue streams across art, music, literature, and digital media. However, broad deployment requires strong quality control to prevent inaccuracies and bias.

Media, Film, and Journalism

AI-generated content (AIGC) is reshaping news production and storytelling. Automated article generation allows journalists to focus on investigative work, while outlets like the Associated Press produce thousands of data-driven stories annually. Tools such as the LA Times’ Quakebot generate real-time breaking news reports, and Bloomberg’s chatbots deliver personalized summaries.
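Data-driven story automation can be as simple as filling a pre-written template with structured data. Below is a minimal sketch of that idea. The field names, wording, and sample event are hypothetical, and the code does not reflect how Quakebot or the Associated Press's systems are actually implemented.

```python
def quake_report(event: dict) -> str:
    """Fill a fixed story template from structured event data (hypothetical fields)."""
    template = (
        "A magnitude {magnitude:.1f} earthquake struck {distance_km} km from "
        "{place} at {time}, according to preliminary data. "
        "This report was generated automatically and may be updated."
    )
    return template.format(**event)


sample_event = {"magnitude": 4.2, "distance_km": 8, "place": "Example City", "time": "6:25 a.m."}
print(quake_report(sample_event))
```

Templates like this are predictable and easy to verify. The trade-off is rigid phrasing, and that gap is part of what generative models promise to close, at the cost of new accuracy risks.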

AI is also entering broadcast media through virtual news anchors such as Xinhua’s Xin Xiaowei. In film production, it appears in AI-assisted screenwriting, deepfake character recreation, automated subtitling, voiceovers, color grading, and editing tools such as Colourlab.Ai and Descript.

Advertising and Marketing

Generative AI enables hyper-personalized advertising at scale. Platforms like CAS and SGS-PAC automatically generate tailored ad copy based on user data. Creative tools such as Vinci and Brandmark.io design posters and logos, while GANs automate product listings and synthetic ad production for rapid campaign deployment.

Ethical data use and human oversight remain essential as personalization increases.

Music, Art, and Animation

In music, tools like Google Magenta, AIVA, and LANDR generate compositions and perform AI-based mastering. In visual arts, platforms such as Midjourney, DeepDream, and GAN-based systems create original imagery and restore damaged artworks. Animation tools such as Adobe Character Animator, along with diffusion controls like ControlNet, allow non-professionals to produce sophisticated motion content.

Across creative fields, AI expands artistic possibilities through both generation and data-driven insights — while raising ongoing challenges around authorship, attribution, and bias.

Economic Impact

Generative AI is expected to significantly boost productivity while reshaping labor markets. Studies suggest 30–50% of work activities could be automated by 2030–2060, potentially adding $6–8 trillion annually to global productivity by 2030.

Research indicates:

- ~80% of U.S. workers may see at least 10% of tasks affected by LLMs

- ~19% could have over half their tasks impacted

- With AI-powered tools, 47–56% of tasks could be completed significantly faster

Investment in generative AI is currently concentrated in North America, with U.S.-based companies raising roughly $8 billion (2020–2022) — about 75% of global investment.

Automation is likely to occur faster in higher-wage economies, but it will primarily reshape tasks rather than eliminate entire occupations. Productivity growth has slowed in recent years. It could receive a sustained boost of roughly 0.2 to 3.3 percentage points per year through 2040, provided displaced workers move into roles that are at least as productive.

Education

Personalized AI tutors and mentors could democratize access to high-demand skills in an AI-driven economy. Already, generative AI tools like ChatGPT support customized lessons, automated feedback on assignments, and interactive simulations tailored to individual learning styles. By offloading repetitive teaching tasks, educators can focus on deeper skill development.

However, risks remain. AI systems may perpetuate bias, spread misinformation, and widen inequality if only well-funded schools can access advanced tools. As knowledge evolves rapidly, education should increasingly prioritize curiosity, critical thinking, and learning strategies rather than static content.

If deployed equitably and responsibly, AI-powered education could make high-quality, personalized learning accessible to anyone — while addressing challenges around access, bias, and student social development.

Jobs and the Workforce

Generative AI is set to automate a large share of cognitive tasks over the next decade, reshaping labor markets rather than eliminating most occupations outright. Productivity gains could be enormous, but workforce disruption is inevitable.

Studies suggest:

- 30–50% of work activities may be automated by 2030–2060 (McKinsey)

- 300 million full-time jobs could be affected globally (Goldman Sachs)

- By the mid-2030s, up to 30% of jobs may be automatable (PwC)

The biggest impacts will fall on knowledge work — communication, reporting, analysis, coding, and creative tasks.

Likely Near-Term Shifts

- Junior developers augmented or replaced by AI coding assistants

- Customer service increasingly handled by conversational AI

- Journalists and technical writers supported by content generation

- Paralegals using AI for document review and drafting

- Designers empowered — but facing wage pressure — through image generation

Meanwhile, demand will grow for:

- Senior engineers building AI systems

- Data professionals validating and governing AI outputs

- AI security, ethics, policy, and compliance roles

- New positions such as prompt engineers and AI workflow designers

Task Automation, Not Total Job Loss

AI will primarily automate activities within roles rather than entire professions. Less-skilled workers may perform more complex tasks with AI support, while some specialized junior roles may shrink. For example, legal drafting and routine analysis are increasingly handled by AI-powered tools, shifting human effort toward strategy, oversight, and judgment.

Knowledge work — once considered resistant to automation — is now among the most affected sectors. Higher-wage jobs may face greater exposure than past automation waves.

Managing the Transition

Large-scale task automation will require:

- Reskilling and lifelong learning programs

- Workforce mobility and job-creation incentives

- Updated labor policies and portable benefits

If productivity gains are reinvested wisely, generative AI could fuel long-term growth and new industries while freeing people from repetitive cognitive labor. But without careful policy and training, inequality and displacement risks will rise.

Law

Generative AI is automating routine legal work such as contract review, document drafting, brief preparation, and large-scale legal research. Models can also translate complex legal language into plain terms and help analyze case outcomes.

When deployed responsibly, these tools can significantly boost productivity and expand access to legal services. However, transparency, fairness, accountability, and reliability remain essential to prevent harmful or biased outcomes.

Manufacturing and Robotics

In manufacturing — particularly automotive — generative AI is used to create 3D simulation environments, train autonomous vehicles with synthetic data, and optimize design workflows.

Beyond simulation, AI systems increasingly interpret physical environments, understand human intent through dialogue, generate natural language responses, and produce manipulation plans that help robots collaborate with humans in real-world tasks.

Medicine and Genomics

Breakthrough generative models capable of predicting physical and biological properties from gene sequences could transform medicine. Potential impacts include:

- Faster drug discovery and precision treatments

- Earlier disease detection and prevention

- Deeper understanding of complex conditions

- Improved gene therapies

Neural network advances are already improving long-read DNA sequencing accuracy, pushing genome analysis toward affordability at scale. As sequencing costs fall below $1,000 per genome, large gene-to-expression models may soon become feasible.

These developments also raise ethical concerns around genetic engineering, privacy, and inequality that demand careful governance.

Military and Autonomous Weapons

Militaries worldwide are investing in lethal autonomous weapons systems (LAWS) — drones and robots capable of selecting and engaging targets without human oversight.

While machines can process data faster and without emotion, delegating life-and-death decisions to AI crosses a serious ethical line. War involves complex human judgment around proportionality, civilian protection, and accountability — areas where AI remains dangerously limited.

Fully autonomous weapons risk violating international law, empowering authoritarian regimes, and creating unpredictable, uncontrollable escalation.

Misinformation and Cybersecurity

AI is both a defense tool and a major threat in information warfare. While it can help detect fraud and false content at scale, it also enables:

- Highly personalized propaganda and psychological targeting

- Deepfake audio and video for reputation damage

- Automated hacking and social engineering

- Rapid spread of conspiracy theories and political manipulation

During events like the COVID-19 pandemic, AI-fueled misinformation demonstrated how quickly false narratives can shape public behavior. State and non-state actors are already weaponizing generative tools for influence campaigns and cybercrime.

There is also growing concern over centralized AI platforms acting as information gatekeepers, shaping what people see and believe.

Strong governance, transparency, and digital literacy will be critical to building resilience against these emerging risks.

Practical Implementation Challenges

Unlocking the full potential of generative AI requires addressing major legal, ethical, and regulatory hurdles to ensure responsible deployment.

Key Areas of Concern

Legal:

Copyright remains unclear for AI-generated content. Who owns outputs — model creators, data contributors, or users? Training on copyrighted material also raises unresolved fair-use questions.

Data Protection:

Training large models demands massive datasets, increasing risks around privacy, consent, security, and misuse. Strong governance frameworks for anonymization and access control are essential.

Oversight and Regulation:

Governments are calling for safeguards around fairness, accuracy, and accountability. The challenge is crafting flexible policies that reduce harm without stifling innovation.

Ethics by Design:

Embedding transparency, explainability, and human oversight into AI systems helps build trust and prevent harmful outcomes.

Transparency, Bias, and Governance

Public demand for algorithmic transparency is growing, though companies often resist disclosing proprietary systems. Open-source models and emerging regulations — particularly in the EU — are pushing toward more accountable AI use.

Bias remains a persistent risk, with real-world consequences for affected groups. Integrating ethics training into technical education and building bias prevention directly into development processes can reduce these harms. However, without regulatory pressure, adoption is often slow.

European initiatives such as the proposed AI Act, which would set harmonized rules for artificial intelligence across the EU, aim to enforce ethical standards for language, imagery, and automated decision-making.

The Misinformation Dilemma

Existing laws sometimes struggle to balance enforcement with feasibility. For example, rapid takedown requirements for fake news can overwhelm smaller platforms and risk over-censorship. Granting private companies sole authority to define truth also raises concerns around transparency and due process.

More nuanced, collaborative approaches are needed — involving governments, civil society, academia, and industry — to counter misinformation while protecting free expression and innovation.

Ultimately, proactive cooperation across sectors is essential to settle open questions of ownership, ethics, and accountability. With thoughtful guardrails in place, generative AI can deliver immense value while minimizing societal harm, guided always by the public interest.

The Road Ahead

The coming era of generative AI presents extraordinary opportunities alongside profound uncertainty. As explored throughout this tutorial, recent years have delivered remarkable breakthroughs, yet persistent challenges remain — particularly around accuracy, reasoning, controllability, and embedded bias. While claims of near-term superintelligence may be exaggerated, long-term trends suggest increasingly sophisticated capabilities will emerge over the coming decades.

At the individual level, the explosion of generative content raises serious concerns about misinformation, academic plagiarism, digital impersonation, and the erosion of trust online. As AI becomes increasingly adept at mimicking human expression, distinguishing human-generated from machine-generated content will grow more difficult, enabling new forms of deception. There is also growing anxiety that endlessly personalized content could intensify social media addiction and digital dependency.

From a societal standpoint, the rapid pace of progress fuels fears of workforce disruption and widening economic inequality. Unlike previous waves of automation that primarily affected physical labor, generative AI threatens cognitive and creative roles once considered secure. Managing this transition fairly will require proactive reskilling strategies, social safety nets, and thoughtful labor policy. At a deeper level, philosophical questions emerge around whether machines should be producing art, literature, and music — domains historically tied to human experience and meaning.

For corporations, governance frameworks for responsible use remain underdeveloped. Generative models magnify risks ranging from deepfake misinformation to unsafe medical or legal outputs. Intellectual property and content licensing questions remain unresolved, while ensuring quality control and bias mitigation introduces new operational costs. Although large technology firms currently dominate research and infrastructure, smaller organizations may ultimately benefit significantly as costs for computing, storage, and AI expertise decline.

As generative AI becomes more accessible, small and mid-sized enterprises could train specialized models tailored to niche datasets and domain-specific needs — potentially outperforming broad, general-purpose systems. Startups and non-profits often excel at rapid iteration in focused problem spaces. However, ease of development also raises concerns about market saturation and unclear value capture. While customization may drive differentiation, the long-term winners and business models remain uncertain.

Investor enthusiasm following the 2021 AI boom has cooled amid valuation corrections and increased scrutiny. The earlier surge — fueled by massive funding in machine learning, robotics, and language technologies — gave way to a market reset in 2022 as expectations outpaced reality. This cycle mirrors patterns seen in previous technological waves such as the dot-com era and blockchain. While hype has tempered, long-term structural impact remains highly plausible.

Looking further ahead, the most profound challenges may be ethical rather than technical. As AI systems assume greater responsibility in consequential decisions, alignment with human values becomes paramount. Beyond improving accuracy and reasoning, priorities must include robustness, transparency, interpretability, and sustained human oversight. Diversity in development teams and data sources is essential to reduce bias and ensure inclusive outcomes.

Policymakers will need to balance innovation with safeguards — preventing misuse while supporting workers through transitions driven by automation. With thoughtful governance, generative AI has the potential to accelerate economic growth, expand creativity, and increase access to knowledge and services across society.

Several guiding principles may shape a responsible path forward:

The Dynamics of Progress

The pace of transformation must be carefully calibrated. Excessive speed risks social disruption, while overregulation may stifle innovation. Broad public dialogue should help determine acceptable trajectories.

Human–AI Symbiosis

Rather than pursuing full automation, the most beneficial systems will augment human creativity and judgment with AI efficiency, preserving oversight and accountability.

Access and Inclusion

Equitable access to education, tools, and opportunities is crucial to prevent the amplification of existing inequalities. Representation and diversity must remain central priorities.

Risk Management and Foresight

Continuous interdisciplinary evaluation of emerging capabilities is necessary to anticipate unintended consequences — without allowing fear to halt progress.

Democratic Governance

Collaborative, transparent decision-making across governments, industry, academia, and civil society will prove more effective than unilateral control by any single actor.

While future capabilities remain uncertain, proactive governance and democratized access will be essential in steering generative AI toward equitable and socially beneficial outcomes. By aligning innovation with shared human values — emphasizing transparency, accountability, and ethical responsibility — society can ensure that these technologies serve to empower human potential rather than merely accelerate technological change.